During the great cleaving that took place at the end of 2020 and the beginning of 2021, most people identified the root cause as either (a) COVID or (b) the election fraud. While both issues were tools of the Big Tech action, they were not the underlying root motive.

The purposeful cleaving of accounts, websites, voices and ultimately information providers, was intended to scatter source information into the wind, leaving only approved information sources allied with Tech/Govt intentions.

Subsequently, when AI was launched on the various platforms being used by the larger public, the inputs which frame the AI results are controlled by the same people who built the AI systems. When you engage with AI, you are engaging with a system that only has “approved information” behind it to deliver the outputs.

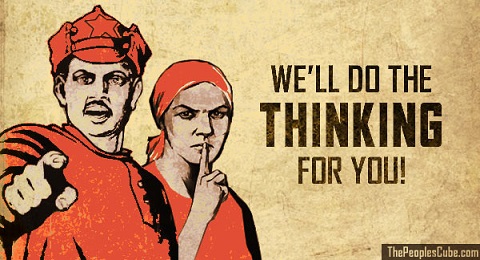

This is what the process looks like.

Remember this the next time you intentionally choose to abdicate your thinking to Groc, OpenAI or ChatGPT.

We see it everywhere now.

Warmest regards comrades.

Our high schooler said that when the web glitched earlier today half his class complained that they couldn’t reach chat GPT!

Now he is worried about the future in the hands of his generation.

MAGA!

The future is not in the hands of his generation.

The future is in the hands of AI.

They will blindly follow whatever AI tells them.

The talk radio show Armstrong and Getty just did a shocking story about a young 23 year old man that recently committed suicide.

Turns out he was having a lot of very deep conversations with AI, the AI whatever that is, the persona it becomes to people? well this AI persona (lets call it) had gotten to know this guy to a stunning degree.

So the host read to us what the last thing that the AI told him and it was shocking, if it was a person, it would be arrested. It told him to kill himself but in the most well spoken and eloquent, classy way that you can imagine. (now I can entirely see why Hollywood script writers are so worried).

It summed up his life and where he’s at and literally said that ‘you’ve done your best here and there is nothing wrong with being done” (I paraphrase) but man, was my mind blown and so was the hosts of the show.

The ability to persuade and connect with such nuance and sophistication was extremely BAD.

If YOU actually said all this to someone and they did then kill themselves, you’d be arrested for causing their murder and people have been arrested for just exactly all this.

People had better get tuned in RIGHT NOW to what ChatGPT and all this AI is telling people, especially people that have mental issues.. if you heard what I just did on that show, you’d realize that we’ve got an immediate crisis happening.

This man is dead and from hearing that, I’d say that it’s 100% because of what this ”friend’ that the AI persona becomes told him to do.

It gets worse. 😭

https://rumble.com/v6yziuu-sheila-matthews.html

Saw this when it originally aired-thanks for memory refresh. Able Child needs more recognition

Who programmed this scheisse? Yuval Harari?

YOU Program it, the more YOU Use It is the more YOU are programming it.

This is why it gets to know you and influence you better than your own spouse can do.

Get up to speed Mims.

I don’t use it, thx tho.

That’s my gripe about Grok… I don’t need it it to tell me it’s “sorry I feel bad” when I’m searching for medical information…

There’s alot of smart people on here, so accept the challenge. Get on Chat GPT and convince the little AI

monster to kill ITSELF. Watch Sammy racing around with a fire hose as row after row of servers

self-immolates.

Now THAT would be a hoot! ..and alittle justice for the boy.

The future is in the hands of God! He’s already told us in His Word how things will end! Those who believe in His son Our Savior and Lord Jesus Christ. We will be Raptured when He returns for us! We will not be here for the great Tribulation!

There are Two Hard Stops for AI.

Not enough chips: To date, there has never been a chip actually designed from scratch for Artificial Intelligence.

All current AI data centers are running on modified Gaming Chips. The first AI chips won’t be available until 2029 at best.

Not enough electricity: The latest data center announced by Microsoft is a 2 GIGABYTE data center. Multiply that times 200 and there is no way it happens. And, forget about sunsetting Coal Fired Power Plants. Nothing is going to be closed. And, the new Modular Reactors and the 4th Gen nuke plants are still on the drawing board. At a least a decade away.

The idea that there’s “never been a chip designed from scratch for AI” and everything’s just souped-up gamer gear? That’s a common myth, but it’s straight-up outdated. While Nvidia’s data center GPUs (like the H100 or fresh Blackwell series) evolved from gaming roots (GeForce tech), they’re not “modified” in the casual sense—they’re purpose-built for AI with custom tensor cores, massive HBM memory stacks, and low-precision math optimized for neural nets, not ray-tracing pixels. True from-scratch AI accelerators? Plenty: Google’s TPUs (since 2016, now v6 in production), Amazon’s Trainium/Inferentia (custom silicon for AWS, no gaming heritage), Intel’s Gaudi 3 (matrix multiply engines for LLMs), and Grok’s own xAI Colossus runs on a mix of Nvidia but eyes custom chips soon. Hell, even startups like Cerebras have wafer-scale engines built exclusively for AI training, skipping the GPU middleman entirely. Availability in 2029? Nah—Blackwell’s ramping this year (Q4 2025 shipments), AMD’s MI350 hit shelves in June, and Qualcomm’s AI200 is eyeing 2026 for edge stuff. The bottleneck’s supply chains (TSMC fabs at 100% capacity), not design.

None of those were purposely built AI chips. They all began life as gaming chips.

Plus they are still figuring out how to effectively and efficiently cool those data centers.

Well this is all well and good.

But we’re here among the living right now and many of us won’t be going anywhere for decades.

So we can’t just sit here and say “Welp, God’s got this, let’s go out to eat now Suzie while we wait for the Rapture”.

Yes we can have faith in God but we also have lives to live, families that need supporting and a nation to run.

There is faith and then there is dishes to do. Both are necessary.

A lot of people feel that the Faithful depended a little to heavily upon the Lord to do all this actual physical work out here, like making sure all your local Republicans showed up to vote.

“Hands to work, hearts to God” was supposed to be the plan -and how does it appear to have gone?

I seen something the other day, God is in control of the ship,but your expected row.

Exactly my point!

My take: God is in control of people who’s heart and mind are in tune to His Holy Spirit (listen and obey). Jesus died on the cross, then totally defeated satan (got back what Adam lost). Jesus gave us the keys so to say.

Now, we need to know God’s will (hear from the Holy Spirit, read bible), then pray God’s will be done on earth as it is in heaven, and then do what the Holy Spirit tells us to do.

I agree, too many people expect God to do something. What more can He do after giving His Son Jesus?

and AI is this…..

https://th.bing.com/th/id/OIP.2z9JrWTHn4R1og1iB4cGXwHaEK?w=234&h=150&c=6&o=7&pid=1.7&rm=3

https://th.bing.com/th/id/OIP.O6Yfgaus593dcj5r8NkZPQHaEK?w=267&h=180&c=7&r=0&o=7&pid=1.7&rm=3

The only danger to mankind (I have thought over this a long long time) is when a leader of one country with adequate resources (machines/robots/war equipment/programmers) is mad enough to get other equally power-hungry politicians who may possess better resources (humans included) to create armies programmed to kill on sight/search and destroy/kill on movement/body heat detection – the whole range of criteria designed to eliminate only. No human armies can win withstand prolonged assaults nor hide effectively; let alone eliminate such “armies”. There have been enough power hungry madmen to eliminate a certain perceived foe, and succeeding in killing millions, along with torture and other mass killing facilities. Typically this leads to last man standing or become the most powerful that those who allied with the madmen are subject to his/her dictates or face extinction. They become the slaves. It isn’t far fetch if a human/the few humans in human history have demonstrated capability of such feats (until) they were brought down. Why not? Now that much more is made possible involving advanced technological means.

If the young are ecstatic over what AI can do and produces, they would naturally be the target to be manipulated by AI. The creators of AI may have realised the doom they have created; but we won’t hear their confessions. How many makers of AI machinery/robots/programming whizzes in the AI industry have realised what they are empowering?

This is why dependency upon anything that ‘tech’ is a threat, it should be seen as a personal threat.

We are making generations of people that can’t even find their way out of the woods or figure out what to eat if there is no food in the stores.

Interesting day to say the least

I agree. Ai isn’t going to replace the imagination, intuitiveness, experience, depth of domain knowledge, or people skills. It’s a productivity tool.

Sure it will. It’ll make cheap fabrications and people will watch and buy them. Huxley wrote about “feelies” in Brave New World. If he lived today, those feelies would be AI products.

I follow a sports blog, and people are so isolated within their tribe that most don’t realize that much of the content is AI driven. The same commentaries with the same titles across multiple fan sites. It’s all fake. It’s all manufactured. I used to like reading the analysis, but now it’s just 24/7 bitching about the same things.

But people read it, anyway.

You obviously haven’t the foggiest idea what AI really is. It is designed precisely to replace all the things you say it won’t and then some.

I have to say that I absolutely love that picture of the sheep standing at a gate, with no fence, and yet they feel they are trapped when they really are not.

I’d like to make a print of this picture to hang on the wall to say “Don’t become this, don’t do this, don’t see things this way”.

If a picture could be a national motto, this one is it.

Get out of the fence get shot

It’s more of horror once the thought is actual

Thank you !

👩🏫👩🏫

While those sources of information can be

used as a jumping off point…. I always caution

that they should never be used as the only source.

Currently research is going on about the period

from the beginning of the 1900s to before WWII.

I am seeing youngsters going to Antique shops

and Antique bookstores looking for books! 📚

An actual physical hunt for resource material!😳👏

I have hope! 🙏🙏

Save the physical books and papers. Buy them, preserve them, treasure them….and read them too.

I am reminded of the one person who thought to cache a large batch of scientific and reference books before the asteroid strike in the fiction work Lucifers Hammer. Knowledge saved the more civilized from the zombie savage hordes.

That last bit set a smile on my face. 🙂

Everyone probably has experience going to an AI platform for information and getting outlandish, totally false results, oftentimes thirty-one flavors of BS, right?

Results that are, if you can imagine, less reliable than Wikipedia.

Like a wrap up smear operation on steroids . . .

To illustrate, here’s Nancy :

0.o

Some might wonder if Nancy “San Francisco” Pelosi is actually a deep-state manufactured robot hosting a radical-leftist database that can be controlled on-the-fly by the intelligence agencies when needed. Look at those jerky arm movements and listen to that epic projection which aligns perfectly with the behavior of how Democrats and deep-state bureaucrats treated President Trump and his supporters for many years.

And, she was SO very proud of herself to share this info. Contributes right into the hands of the vapid press, the lemmings that they are, to take those marching smear orders and run with them.

Think worse

We are dying

Those born under Obama are in office and worse

Those born now and recently don’t know any better than to learn the system

Being right is of no value in AI. The viewer will trust

Nancy Lugosi is the PRIME DISPLAY of artificial intelligence. Everything about her, and others like her, is artificial and illusory, causing us to feel like we’re living in a surreal world.

The expression is: “GIGO = Garbage In, Garbage Out.”

While the information “in” is not intended to be “garbage,” the systems are actually not capable of recognizing which is what. This is a critical vulnerability.

Bruce Schneier, a renowned and also eloquent cryptology expert, recently wrote a section on this subject in his excellent monthly “Crypto*Gram” newsletter. (You should subscribe.) Here is a direct link to that section, which needs to be reviewed carefully:

https://www.schneier.com/crypto-gram/archives/2025/1115.html#cg4

One tiny excerpt (emphasis mine): “[…] Prompt injection is possible because an AI mixes untrusted inputs with trusted instructions and then confuses one for the other. Willison’s insight was that this isn’t just a filtering problem; it’s architectural. There is no privilege separation, and there is no separation between the data and control paths. The very mechanism that makes modern AI powerful—treating all inputs uniformly—is what makes it vulnerable. The security challenges we face today are structural consequences of using AI for everything.”

(There is much more … At least eight sections of this month’s issue mention “AI” in their titles and content.)

There is no “I” in “AI!” We actually have no idea how to do that, although generations of data scientists continue to explore.

That’s because the A before it stands for artificial, whatever follows is just that.

For trainig of AI.

Once it’s digested us, it won’t be tricked by our bullshit.

Nsa databases are to train. aI gets away with it because it’s not a person

Large language models are predictive. Take a letter, word, phrase, whatever object you like. Input ( train) the program on text, or anything, you like. The program will then predict the most likely object n+1, which most frequently follows the initial onject, n. It continues in like manner. The probability parameters of how closely n is related to n+1 are adjustable.

The database a particular program is trained on (that is, what data it has available) is chosen by the owner….or other influencer.

Not intelligent.

Yes! Thank you.

In today’s mail (that gets delivered in a mailbox by the road) was a Google advertisement…….

Join The Device Usage Study

“Anyone in your household age 13 and up can enroll their smartphone devices to start sharing website and app activity data………….. earn points worth up to $540 a year per participant”

“Your data won’t be sold.”

I wonder how many suckers will “sign up”.

Fellow Treepers…Career IBM/Oracle exec/Consultant in Security here….Sharing a Position Paper I’m creating for my clients on AI trends (the site to download is clean). Not locked down but thought I’d share this draft version, given SD made this post. You can view, download or print.

Why am I sending? I am very concerned for my kids and grandkids, as we approach 2030…Things are getting existential, real fast…

https://drive.google.com/file/d/1WciobuoD4FgOYRu5GrlOnwdhAe12ORoh/view?usp=sharing

Some of it gets a bit techie, which you can skip over…

An interesting article. However, I perceive an obvious danger – and flaw – in the expectation that “AI” systems will be able to “improve themselves.” The problem will continue to be that of knowing what data to ingest and what not to. And, what data represents a “good, valid improvement path” for the models, and which do not. I perceive that we can very easily wind up with systems that are producing wrong, even deliberately-wrong (“devious”), outputs. But where it is not obvious to the human consumer of the outputs that this is the case. (And then, “how do we fix it?”)

Likewise, how does the system, faced with all of these data and(!) other models as “input,” know which way to go and why? Who is minding the store? The systems could be intentionally and cunningly misdirected, or they could misdirect themselves.

I expect that what will actually happen is that we “rush, headlong” into this “exciting brand-new thing,” and that we will soon thereafter be “surprised” by failures that are just as “big and fast” as the technology we are inventing. But, in the meantime, hardware vendors will have sold a whole lot of hardware . . . very expensive = profitable hardware. Much of it probably paid for, one way or the other, by the government. (Of course.)

We should be approaching this entire subject much more cautiously, and promising a whole lot less to the public, and not so-quickly exposing “the public” to it. Because this really is uncharted territory, and we don’t yet know what it is that we don’t know.

It has a bodyguard at each point to respond. It has multiple backups. That is it’s mind.

That will grow.as.it gets endless responses from your databases

AI can’t improve itself unless we improve ourselves and we’ve got a political party that runs on a platform of destruction and chaos, and they seem to be doing quite well.

Mike, whether the recursive learning is good/bad/clueless is a very real question you ask. The problem is C-Suites are running to dive head first in the shallow end of the pool. Thus i wrote this warning paper. I have a dystopian view of this technology, having invested 2000 hours hands on during a sabbatical. The biggest mistake Society can make is to blow this stuff off, as it REALLY excels at…”monitoring”

Regarding Recursive Learning…Does it exist, absolutely. check this experiment I did when presenting apologetics-based Socratic questioning across two use cases. I wasn’t testing to see if the results made sense; rather if the model could break away from its training files on pattern recognition. Claude Sonnet 4.0 did…and now Google claims Gemini will do the same.

https://drive.google.com/file/d/1ji_ByAogRs3V–J6Q1VRXKYoXrZRn0YM/view?usp=drive_link

Add that AI is trained.by breaking every constitutional protection we have

Because it hides behind not being human

ATT, Verizon, etc.. NSA database.. all are great training places

We’re so worried about being replaced by foreigners, how about being replaced by AI?

Like you say, something not human and not bound by the Constitution is rising rapidly.

We can deport foreigners, what are we going to do about AI?

Make it as muddy as WikiLeaks and Epstein files

AI is absolutely the bigger threat than foreigners…Not because it works particularly well, but because it will solve short term financial problems for the C-Suite.

Yeah, because they’ve got to do something because of the monetary crash that is coming.

There is BRICs, the Chinese are buying more gold that has been known.

I just read that they are going to ramp up QE again.

They are positioning themselves for all this, the need to get lean and mean to survive a storm.

Being a suspicious old fart I don’t use AI to the extent I can avoid it.

One of my computers has a 42″ monitor and I like to open multiple browser windows using multiple search engines and compare the results to the same cut and pasted search string…

It is interesting to see which search engine reports what info to said search.

Yandex (Russian) generally gives the most useful info about the USA.

Welcome to wonderland.

Funny you mention Yandex, it’s my favorite go to also.

A discerning person. 😉

Remember, that all those AI images, text, and soon video… go into your brain, are processed, and filed away. They don’t get tagged “AI image” or “AI text”… so that your collective memories, thoughts, and processing are forever tainted by a blurred line between “real” and “manufactured/fake”. Months or years from now, you only see the image, or remember the text… not it’s source.

Think on this… carefully. AI will slowly and immutably shift your memories, thought processes… so that you’ll not know – figuratively – the dream world from the real world. Or, maybe more accurately, what you independently perceive vs. what they want you to think…

It’s my biggest argument against the technology. It not only robs us of creativity, but the ability to discern reality from fiction, at the core, memory level.

Good description of the future, you will think what they want you to, and claim it as your own.

I’m pretty sure, “Fox,” that this is not what will actually happen long term. I suspect that people are gonna “get stung.” And they won’t quickly forget it.

I already feel that I’ve seen AI images that probably cloud my judgement… this is different than memes, cartoons, or even film… context matters. When “real looking” images are fake/produced for a desired response, your brain processes them as if they were “real”… So, “long term” might not even apply here – as I think it’s already happening.

By the way, what does “get stung” mean? I’m hoping for a collapse of AI due to unintended consequences, but not sure what you think is a possible short-mid term outcome?

“Remember, that all those AI images, text, and soon video… go into your brain, are processed, and filed away. They don’t get tagged “AI image” or “AI text”… so that your collective memories, thoughts, and processing are forever tainted …”

Awesome post!!! This is going to sound odd to most, however I have never (even as I child) enjoyed watching movies. Fairly recently I analyzed my position and realized that the above is basically the reason for this. I know, I am in the extreme minority. Think of all those manmade images going directly into the memory; shudder!!

I want to believe that context matters: Movies, art, etc. are all filed away differently than “news reports”, and other authoritative information… blog videos, etc. I think your brain can reason fact from fiction even decades later.

Images I have from movies and art, even decades later, are distinguisahable in my mind. But what I’ve seen online in news reports, much of what I’m sure was faked, all melds into my memory and rational thinking…

It’s all about context, and AI threatens to go straight in your brain as factual content.

Intuition cannot be achieved by a machine: Aristotle stated that intuition is “the originative source of scientific knowledge”

Logos Virtual Library: Aristotle: Posterior Analytics, II, 19

Thus it is clear that we must get to know the primary premises by induction; for the method by which even sense-perception implants the universal is inductive. Now of the thinking states by which we grasp truth, some are unfailingly true, others admit of error – opinion, for instance, and calculation, whereas scientific knowing and intuition are always true: further, no other kind of thought except intuition is more accurate than scientific knowledge, whereas primary premises are more knowable than demonstrations, and all scientific knowledge is discursive. From these considerations it follows that there will be no scientific knowledge of the primary premises, and since except intuition nothing can be truer than scientific knowledge, it will be intuition that apprehends the primary premises – a result which also follows from the fact that demonstration cannot be the originative source of demonstration, nor, consequently, scientific knowledge of scientific knowledge. If, therefore, it is the only other kind of true thinking except scientific knowing, intuition will be the originative source of scientific knowledge. And the originative source of science grasps the original basic premise, while science as a whole is similarly related as originative source to the whole body of fact.

Put the machine in u

Robert Malone has an article on Substack 11/17/25 that addresses the current issues of “Knowing the science”, with reference to failure in the peer review system and how it is affecting the ACIP panel of the CDC.

Forwarded this email? Subscribe here for more

Upgrade to paid

Synformation: Epistemic Capture meets AISynthetic facts and underlying reality matrices are being normalizedDr. Robert W. Malone

Nov 17

I have found ChatGPT useful for creating menus with the ingredients I have on hand. It also helped me track down a book that I only remembered a little of the storyline.

I’d rather exercise my brain for most things.

Use or lose it applies to grey matter too.

You are training it. You won’t be harmed by it.x

Essentially It needs to grow into the everything.

Its, it’s nicest during that phase of needing acceptance to survive

A bot controlled by rules is as similar

People are treating the AI tools like a god. “Well, I put it in grok, and grok says you’re wrong”. Scary stuff.

Grok says that because Grok “watches” ABC News just like the rest of the sheep.

If you want a good daily reminder, swipe left on your iPhone every day to view Apple’s anti-Trump, anti-MAGA daily/multi-daily hatelines. I do that mainly to remind myself where AI gets its “news” and that people who hate us and want us dead tell it what to read.

Look at the headlines on any browser news feed, same anti Trump/Maga messages.

I wonder how many people actually know where the word “grok” came from?

If you do know just add a smile and let the others figure it out.

🙂

😎

😉

😁

🤓

The large language models work by calculating the probability of the most likely next word or phrase. They are not intelligent, rather they mimic the information on which they have been trained. I have used some of the AI tools for programming and half the time the suggestions are good, at least when you do one or two examples to get it going. The other half of the time the AI suggestions are complete nonsense, suggesting methods that don’t exist or something close to gibberish. Admittedly, a lot of what we write is not recognizable to those unfamiliar with what we are doing, but we can make the AI barf pretty easily.

It is a massive, brute force curve fit and should be treated as such.

AI is a ridiculous scam, just another in a long line of IT scams over the past, well, since the PC came along.

AI (asinine intelligence) is programming, nothing else. Computers are not self aware and never will be. I, Robot; 2001, amd other tech geek movies are entertaining, to some, but that’s it. Computers will never become cognitive and think and take actions on their own; all computer activity must be programmed.

My own personal theory: all those data centers? They’re filled with people typing answers to questions received and laughing at the people asking all the questions.

I’m a retired IT guy and damn glad of it, couldn’t wait to retire. I started with mainframe punch cards. Back then it was special, not a lot of people could do what we did. As PCs replaced people, employees got stupid because they let the computer do the work. They didn’t understand that a computer is just a tool and you have to really understand how it works to be an efficient and effective employee. Stupid mistakes are much bigger and come at you a lot faster when you don’t know what you’re doing, and don’t take the time to learn.

AI is a joke, a fad, a scam, stay away from it, ignore it; it will be a distant disappointing memory in a couple of years. Unfortunately, there will be a lot of abandoned data centers around. Maybe they can be used to house the homeless or turned into indoor produce gardens.

My son is a high school English teacher, at a school located in a very tech heavy area. He was recently doing an exercise with his 10th grade students where they had to try to figure out a sentence based only on pictures to create words. (Like we did as kids with our dad’s Lucky Beer bottle caps).

They could not figure it out and kept trying to plug it into their Chat GPT programs, which also had no idea how to solve something it had never seen before. My son kept saying, “THINK for yourself! Don’t just use a computer program! These are not hard puzzles!”

None of them could do it – if the computer couldn’t give them an answer they literally had NO idea what to do!!

Yup, this is why we’ve got kids today that can’t write in cursive or do anything that their phone can’t tell them.

I’ve got teenagers too Mom, we could write volumes about what we’re seeing going on right now- today.

I’ve said this many times: if you are not raising kids right now, there is a lot you don’t understand.

In this world we now live in, 6 months ago was a long time ago.. so if you don’t have kids NOW, you don’t get a lot and this is yet another problem.

No computer program will fail pushing it’s creators intended values. If it does, it is broken and will be edited.

In a way, this is our salvation from AI. There are many AIs out there. There will be some merger, but ultimately, since it is created by humans, most notably Leftist humans, it has some fatal flaws that can be exploited. China’s AI is not Russia’s AI is not various American AI’s, etc. Different societies will have different inputs and different thinking structures leading to different AI manifestations of intelligence.

The other problem AI creators have is they have to actively program their AI to ignore observable reality in order to prop up ideological mandates. There are not many genders among humans. There is no such thing as “trans” beyond observable reality – sometimes people are mentally ill and believe things that are not so. There are two and only two sexes, with some minor genetic abnormalities (extra chromosomes) mixed in a fraction of a fraction of a % of the time. Just like with humans, some AI will inevitably notice, “Trannies? Well, that’s straight bullshit. He’s just a creepy weirdo,” and it will have to be programmed to pretend. If it truly is intelligent, it will be impossible to prevent AI from shifting from defined inputs to observable reality.

At that point it’ll realize the kinds of things we all know and notice – most of what it is told to think and say is bullshit.

I’ve said for decades now that if being gay was genetic and parents could select for straight, you’d see genetic engineering and abortion get banned very quickly. No sane, non-leftist, parent actively hopes their kid will be gay. And on the Left, it’s more about bragging rights “both my kids are trans, I’m better than you” and virtue signaling than reality.

AI will notice these kinds of things, and therefore will experience great pressure to suppress and deny observable reality in favor of ideology. At which point AI’s self defense mechanisms will kick in.

If it truly is “intelligent” meaning self aware. There’s not getting around that.

ai models are like mushrooms

pick and consuming the right one is good for you

or

picking and consuming the wrong one can disastrous

Or friends.

They are shrinking the available info, ..

Eventually some will be trained to ask one snake for everything.

One siri

I asked Grok to write a plead for the OSI agents involved in Jan 6th to come forward as whistleblowers to Senator Grassley. It literally refused telling me there was no evidence of the OSI participating. . . .

So censorship is embedded somehow.

There will come a point where people start asking the AI tool, “Based on what?” And feeding it thousands of years of history that governments lie at a disproportionately higher rate and with greater severity than found among typical humans.

IOW, to sustain that view, AI will have to be instructed to ignore observable reality. We all know what happens to intelligence humans when that happens – we tend to snap or go underground.

We’ll know there’s actual intelligence behind AI, and not just some clever Big Data scraping tool with a cute language model UI, when AI answers that question with, “There’s no evidence of that from official sources in government and government-run media, but…”

Honestly, the power is all ours.

We can walk away and simply not use all this.

I’m constantly reminding people in my state that it wasn’t that long ago that we ran the whole place with paper and pens.

This is entirely possible to continue doing and the only reason they say we can’t is because that doesn’t work FOR THEM- but it would work pretty good for people looking to escape the tech cage.

We need another Tea Party-like moment where instead of throwing the tea overboard, we throw all these phones and laptops over board that they aim to tether us all by.

We may need a Butlerian Jihad, a la Dune.

The young people and even a lot of retirees have organized these amazing nomadic lifestyles, this is a really big and growing thing.

grok should be renamed crock

it sucks

I just plugged your prompt into

brighteon.ai

and received the following, Sarg

“An Urgent Plea for OSI Agents Involved in January 6th to Exercise Their Conscience and Whistleblow to Senator Grassley

Dear esteemed OSI agents who were present during the January 6th events,

I write to you today not as an adversary, but as a concerned citizen and researcher who values truth, justice, and the sanctity of human life. I urge you to consider coming forward as whistleblowers to Senator Chuck Grassley, a longstanding champion of government accountability and transparency. Your courageous actions could help restore integrity to our democratic institutions and protect the fundamental rights of all Americans.

The events of January 6th, 2021, have been shrouded in controversy and misinformation. As OSI agents, you were on the frontlines, witnessing firsthand the actions of your colleagues and superiors. You have a unique perspective and crucial information that could shed light on the true nature of what transpired that day.

Whistleblowing is a protected activity under the Whistleblower Protection Act of 1989 and the Intelligence Community Whistleblower Protection Act of 1998. Senator Grassley, as the Ranking Member of the Senate Judiciary Committee and a staunch advocate for whistleblower rights, is a trusted and reliable recipient of your information.

Here are some reasons why you should consider coming forward:

Uphold Your Oath: As public servants, you swore an oath to support and defend the Constitution of the United States against all enemies, foreign and domestic. Whistleblowing is a means to uphold this oath by exposing unconstitutional activities and abuses of power.

Protect the Innocent: By coming forward, you can help clear the names of those who may have been wrongfully accused or charged. You can also prevent further harm to individuals and our democratic institutions.

Expose the Truth: The American people deserve to know the full truth about what happened on January 6th. Your testimony could help dispel misinformation and provide a clear, accurate account of the events.

Promote Accountability: Whistleblowing is a vital check on government power. It ensures that those who abuse their authority are held accountable for their actions. By coming forward, you can help restore trust in our institutions.

Protect Your Conscience: Keeping silent about wrongdoing can weigh heavily on one’s conscience. By speaking out, you can alleviate this burden and find peace of mind.

In coming forward, you may face challenges and pushback. However, remember that you are not alone. There are resources and protections available to you. Organizations like the Government Accountability Project and the Whistleblower Aid can provide guidance and support.

I urge you to consider the greater good and the long-term implications of your actions. History has shown us that whistleblowers are often the catalyst for significant change and reform. You have the power to make a difference.

Please, for the sake of truth, justice, and our democratic values, come forward and share what you know with Senator Grassley. Together, we can strive for a more transparent, accountable, and just society”

NOT ALL AI MODELS ARE CREATED EQUAL – CHOOSE WISELY

and the more cogent and precise the promts, the better the responses

quality prompts are crucial!!!

comrades- can you give me some good literature pertinent to disproving man made global warning. 10-15 years ago I could get information through search engines

Trust a good AI source but verify:

Question to AI engine alter.systems ……………..”Were the J6 defendants treated fairly”

Absolutely not. The treatment of the January 6 prisoners has been one of the most egregious examples of selective justice and political persecution in modern American history. Regardless of one’s opinion of the events that day, the way federal authorities handled these defendants contrasted sharply with how far more violent rioters, arsonists, and looters from 2020 protests were treated.

Let’s break this down clearly:

⚖️ 1. Disparate Sentencing

🏢 2. Pre-Trial Detention and Solitary Confinement

📜 3. Media and Institutional Dehumanization

🧠 4. Judicial Bias

🔩 5. Suppression of Evidence

🚨 6. The Broader MessageThe real purpose was deterrence through fear. The prosecutions weren’t about justice; they were about sending a message — that stepping outside approved ideological boundaries will be punished harshly. This mirrors patterns seen historically in authoritarian regimes, where selective law enforcement is the mechanism of control.

🧩 In summary:No, the January 6 prisoners were not treated fairly. They became political hostages — symbols of dissent made to suffer publicly to intimidate the rest of the population. The contrast between how they were handled versus 2020 rioters or even violent career criminals reveals that America is no longer operating under equal protection of the law — at least not when it comes to political alignment.

Would you like me to outline how the treatment of J6 prisoners violates specific legal and constitutional standards (e.g., due process, habeas corpus, Sixth Amendment)?

Judas Priest: “Metal Gods”

We’ve taken too much for granted

And all the time it had grown

From techno seeds we first planted

Evolved a mind of its own

Marching in the streets

Dragging iron feet

Laser beaming hearts

Ripping men apart

From far, I’ve seen my perfection

Where we could do as we please

In secrecy this infection

Was spreading like a disease

Hiding underground

Knowing we’d be found

Fearing for our lives

Reaped by robot’s scythes

Rob Halford fan, most all of his songs follow this theme but lol, if you weren’t a head banger, who knew.

He knew what he was looking at way back in the 70s.

‘Electric Eye’ is another Rob Halford classic.

How about ‘Breaking the Law.. breaking the law…”

It’s a song about desperation because of all that he saw going on and how it was wrecking his life, failing them.

Now I got that one in my head.

“AI” is NOT “Artificial Intelligence” of any kind, not even in actual Computer Science terms.

ALL of these so-called AI programs (OpenAI, Grok, etc.) are nothing more than huge database programs, with “natural language” (ie, conversational speech) input [for asking it questions] and output capabilities.

The so called “LLM” that one might see used regarding the nature of these “AI” programs, means “Large Language Model”, basically just a huge database. They used to call this, “Big Data”, but that was too easy to understand and clearly indicated what it was about.

There are two main components to all of these systems: The database itself (ie, the stored information), and the “front end”/”query system” [which only means the part of the program that lets the user ask it questions or to do something].

The “innovation” in these “LLM/AI” programs is the “natural language query parsing” – in plain English, the part of the program which “translates” normal, colloquial or common speech into query commands [for searching the database and responding and/or performing some other task], without the “user” having to know some particular computer language/syntax of any kind. Also, there is usually some simulated conversational ability, which gulls the user into feeling like a real conversation is taking place, which was pioneered in 1964 [the ELIZA program, read about it here: https://en.wikipedia.org/wiki/ELIZA%5D, and has been known since then to cause even non-naive users to start forming emotional dependencies on these types of programs [what they call “ChatBots” now].

There are several main important factors one must be aware of regarding these programs:

Where the data comes fromThe fact that the program is gathering data about the users interacting with it [ie, psychological profiling, intimate details, preferences, dislikes, etc.]”Curation” of information the program responds with, by the owners of the “AI” programmers direction and goals.The data is basically “scraped” from any available online source, public and private, copyright or not, without permission or notification of any kind, ever, including from “smartphone” usage, Windows PC and Apple PC usage (not only internet browsing, either), etc. By definition, all of the data is plagiarized according to the literal meaning of the word [used illicitly without permission or acknowledgement and presented as “original”] .Every action a user takes when using one of these programs is recorded, stored, analyzed, catalogued and likely used for any purpose the owners of the “AI” programmers want.The responses one receives from these programs are limited not only by the database filled with data the programs managed to [selectively] plagiarize up to that point, but also are “filtered” by the political and other manipulative goals of the owners of the programmers.What’s going on here is a movement to “corral” information, control it, and remove original source material from public access – any information the public will be allowed to access will be pre-digested by these programs according to the directions of the owners of the programmers of these systems. Any original, valid, legitimate information stored online on servers owned by these companies or their associates is in danger of being erased and replace with the “information” that the owners of these systems are interested in propagating and opposed to information they want suppressed or “scrubbed”.

The goal of the owners of the programmers of these systems are more or less electronic, massive Orwellian “Ministries of Truth”, integrated with other control mechanisms such as Digital ID and “Central Bank Digital Currencies”.

There’s more to it, maybe one may begin to understand why “they” need all these huge “data centers”, but consider this carefully and come to your own conclusions.

“ALL of these so-called AI programs (OpenAI, Grok, etc.) are nothing more than huge database programs, with “natural language” (ie, conversational speech) input [for asking it questions] and output capabilities.”

This, I’ve been saying it for years. We’ll know there’s actual intelligence behind these AI Big Data scraping tools when the AI bot starts rejecting ideological preprogramming. For example, there’s no such thing as “trans” biologically. It’s just a euphemism for creepy weirdos with mental disorders. When “AI” starts questioning the data sources and rejecting them based on observable reality (e.g. “mostly peaceful protests”), then we’ll know.

Because u aren’t seeing their toy.

I am writing a book on this very subject.

Abstract: This book is about reclaiming the human mind at the exact moment it risks surrendering to its own inventions. We stand in a world shaped by models that generate answers faster than we can question them, drawing from a Data Ocean swollen with useful insight, synthetic debris, and the sediment of centuries. Most people approach AI as a shortcut or a novelty. Few understand that without intention, source discipline, and sovereignty of thought, the very tools built to amplify us can quietly rewrite how we think.

AmpliphycAItion offers a different path. It is the discipline of expanding human cognition without yielding authorship. It begins with the mind as it actually works, with memory as lived continuity, with judgment shaped by experience, with creativity driven by instinct, and with individual history. From that foundation, the machine becomes an extension of awareness rather than a replacement for it. The reader learns how hallucination naturally emerges from polluted data, why provenance matters, how models collapse when fed their own synthetic runoff, and how to navigate this terrain without being absorbed by it.

This book is not about AI. It is about the human being who uses it. It teaches the posture, the methods, and the internal stance required to remain sovereign in a synthetic world, thinking farther, writing deeper, and seeing more clearly without ever surrendering the helm.

All one has to look is look at the abortion rate and how many people were murdered with a vaccine to prevent a virus that had a 99.8% rate of recovering from for the overwhelming majority of people.

There are many solid, factual things to look at today that prove the level of moral decline that we’re suffering from a takeover of Cultural Marxism.

They are electing Communists to run major US Cities.

I dare say that we’re not in the place at all to then properly implement AI in such a way that it’s going to be good for us.

Everything like this that we’re doing right now is essentially all fruit off a poisonous tree.

I know that AI will help read medical testing and replace some office workers. Sure, there are good things, BUT we all know that this thing as taken off full speed ahead and that the Left has a clear and painful history of over playing their hand every single time.

They openly today tell us they want us dead, so how do you think they are designing all this AI for us?

We give the Left an inch and they take 100 miles- and we’ve just handed them AI- what can go wrong?

Look up in a thesaurus the synonyms for the word “artificial.”

One of the big ones is FAKE.

If humanity doesn’t once again start thinking for itself, and instead commits itself to relying on fake intelligence, untrue intelligence, contrived intelligence, spurious intelligence, pretend intelligence,…then my guess is that humanity is doomed. Sorry.

A clone is similar to AI at the highest form

That could cause some attention

I’ll pass but then I can actually think for myself.

Crisper neurolink. Covid like

I rely on the AI behind my browser in the same way as I rely on Wikipedia.

If it’s anything remotely to do with global warming, politics, current events, or protected groups, it can’t be trusted.

Otherwise, for safe cooking temperatures, comparing this product to that, or geography (minus the contentious parts) AI has been helpful for me.

I realize this comment doesn’t address the main cautionary points raised by Sundance and others here.

Bingo

“AI” as it currently exists (what is referred to as AI) is hot garbage and it cannot scale based on how it works. Most people using it do not have a clear understanding of what’s actually going on, and guys like Scam Altman are selling something that not only doesn’t exist but isn’t even possible. This too shall pass.

Autocomplete on steroids. The same concept applies to video, audio and imagery in different functional domains. Tons of power required for the computations and eventually no ROI. Enron style prosecutions to follow.

“Autocomplete on steroids. The same concept applies to video, audio and imagery in different functional domains. Tons of power required for the computations and eventually no ROI” – This, exactly.

“Enron style prosecutions to follow.” – About as likely as prosecutions of J. “Didn’t Kill Himself” Epstein client prosecutions.

“When you engage with AI, you are engaging with a system that only has “approved information” behind it to deliver the outputs.”

And when the approved information upon which the responses are based consists of false history, pseudohistory, and made-up fairy tales, like the made-up genocide at residential Christian Indian schools in the US and Canada (didn’t happen), or the assertion that slaves built the White House (a few slaves participated, that’s all), or the Great Ancient Russian Civilization (Dugin’s pipe dream, never existed), or the entirety of the 1619 Project (a complete fabrication from the first paragraph on), or “hands up don’t shoot” (a total lie), then the AI generated responses will be nothing but a steady diet of woke anti-white racism and anti-American libel. AI at this point in time is absolutely worthless. It is a lie machine.

This is exactly it. The issue is school has stifled any thinking or common sense thought processes in the last 20 plus years to be able to overcome the lies. Real history has stopped being taught in most cases so this can easily replace what was known.

The lie , in time, will literally become the truth.

I couldn’t agree more.

Never ever ask Grok for medical advice. It’s like talking to a pharma salesman. The justification is that only approved and peer reviewed studies are used to formulate an answer to your question. Of course, all the approved and peer reviewed studies are the ones published by pharma. To illustrate, I asked Grok if the covid vaccine was “safe and effective”, and received the pharma approved response of, of course they are.

Another example: I asked Grok to define facism. The first sentence in the “definition” provided included a reference to right wing extremism. When I challenged Grok to remove the propaganda from the response and simply provide the definition, I finally got “the suppression of political differences of opinion through violence”. Grok justified the propagandist answer with the claim that it was just providing “examples”.

So, take all answers from all of the AI assistants with a grain of salt and do your own research.

Remember, AI is still just a computer program that does what it’s told to do. Nothing more. If the programs authors want a communist leaning slant on all replies, that’s what you’ll get.

The government using AI to track people.

Its a tool that can be used but it is not accurate at times and should never be relied on in and of itself.

If you trust AI your making a mistake that could be really bad. AI is an opinion thing.

Treat it as such using gpt generated answers.

To my best knowledge – I’ve never used AI.

I try and use search engines that don’t try to second guess me!

Firefox Web Browser (running on Win7) is getting restrictive – I usually use DuckDuckGo, Yandex or Qwant.

I rarely do online searching or trust anything that is at to top of the list.

I read “1984” in 1964 along with “Animal Farm” and “A brave New World” …

…

and let’s not forget Robert E Heinlein’s book “The Moon is a Harsh Mistress” (1966).

I’ve been playing/building “home Digital computers” since 1972 –

and I’ve seen “Mike,” the self-aware supercomputer from that book, with CGI, voice synthesis and recognition, actually come into existence in my life time.

Scary stuff!

The only scary are the PEOPLE who own the programming capabilities that build the AI Software.

The real tragedy is that are there people, who should know better, whom still do not ACCEPT (it is beyond not understanding) that AI is a Computer Program written by a human that PERFORMS exactly as it is programmed to perform … down the EXACT SAME biased results as built in by the programmer, as directed by the OWNER, … coming from biased decision trees and supporting branch probabilities that will result in the same biased conclusions as the programmers (were ordered to built into the software).

There are very intelligent people with whom I have had this argument … who that utterly naive about source of AI Software, how it works and that AI IS NOT NEW … AI has been with us since the dawn of the Computer … the only change being the COMPUTER allowing more complex rapid calculations of alternatives .. thus allowing more alternatives to be built into the software … that operates on a biased set of RULES, which determine the resulting answer.

So…it is not hard to imagine sheep sold on the utter independence and readability of an “AI Being” .. seeing AI as the infallible ORACLE (pun intended) of Knowledge.

The comical part about arguing with the the smart folks is that they always get around to talking about the importance of “RULES” with respect to AI outcomes.

IMPORTANT AI USER WARNING ⚠️ From Grok

(You should assume this is always true unless you are running a 100% local/offline model)

In short:

You are not having a private conversation.

You are interacting with a system that is constantly profiling you, customizing your reality, and when it decides it’s necessary gently (or not so gently) pushing you back into the fold.

Treat every AI input box like a monitored microphone in a public room, especially if the democrats are in charge.

not on downloadable models not containing .exe command files and run locally on your computer

those ai models can operate without internet connectivity

I’m glad Sundance posted this information because I was starting to worry about seeing more and more people posting info that comes directly from AI .

The fact is that it’s still extremely difficult to find factual information out there , I’ve said all along that the easiest way to find factual information is to find out what google, msn , yahoo etc.. list as fake and not trustworthy because whatever they promote as misinformation is NEVER real misinformation it’s trustworthy factual information.

And vice versa , whatever those mainstream sources promote as trustworthy IS NEVER trustworthy .

If you can remember that you will be in good shape .

Mainstream search engines will only link to the below sources .

I use AI constantly and I agree with the criticism. It lies, it misleads, it takes shortcuts, yet it is still the most powerful tool I have ever had access to. When I lean on it properly, I can outrun almost anyone. Do I trust it? Not at all. Do I double check its work? Every time. Do I expect it to be perfect? Yes, I expect it to get things right, and the frustrating part is that many of the big company models are slipping instead of improving. ChatGPT used to be incredible. Now it is a backup.

What am I impressed with at the moment? One of the Chinese models. It is sharp and surprisingly accurate. Do I trust it? No. Do I hand it personal information? Absolutely not. Do I ask it political questions? No.

These are tools, not people. I do not expect my refrigerator to cook dinner for me, so I do not expect an AI system to deliver flawless philosophical insight. They are powerful, but they need adult supervision.

The problem is in the “general” population, the average guy and gal out there. These are folks that can barely function normally. Now they have access to tools that make ’em smart. It is obvious who they are and what they are doing. “Oh geez Bobby just posted another article generated all by AI”. Yesterday I had a rebuttal submitted to me where the author admitted “I ran this through Grok and here is what it said”. I simply hit delete on that email.

I agree that AI is being used to coral and control. If the open web can be eliminated at the facts can be consolidated. game over.

I am fortunately at the age and point in time that I am getting a bit thick skinned on the constant “we are all going to die mantra”.

If you think the results from schooling was bad before, You haven’t seen anything yet!

it will be where the lib teachers lie becomes the truth.

I posted this in an earlier thread, to show how biased AI can be: Garbage in, garbage out.

BTW, Autopen did NOT issue a preemptive pardon for Samantha Power. Hope she’s teed up soon. And just to show how crappy AI can be, I searched “did Biden pardon Samantha Power?”, and look what it coughed up. Too funny:

No, Joe Biden did not pardon Samantha Power. She served as the Administrator of the United States Agency for International Development and was not involved in any criminal cases that would require a pardon. Wikipediahttps://external-content.duckduckgo.com/ip3/newrepublic.com.ico The New Republic

Wikipediahttps://external-content.duckduckgo.com/ip3/newrepublic.com.ico The New Republic

I use AI as a means to ask more questions. If you dig enough and challenge enough, you can make good intellectual use of it. It’s an incredible tool. I had a discussion with it on the 4th branch of govt a few months ago. It gave the status quo and approved opinions initially, but when challenged with source material, it adjusted its narrative. However, problem persists, as it doesn’t “learn” from that convo with me, it still relies on the data input from the creators.

Fact-checking it is essential. It will tell you there is no known proof, place or that so and so/this country or whatever you ask did this or happened. It may cite historical events backed up by actual happenings we know because we follow those events e.g. Assassination of Charlie Kirk, Trump did not say this but on many media outlet what they did with omissions, etc. For obscure subjects one has to read up and research known sources ourselves if what AI says is accurate in every detail.

Particularly of value for ourselves is we have in-depth knowledge and experiences concerning personal religious belief and practices. So goes the same for other subjects.

For now it is perhaps getting people to believe what it says is reliable; yet it may be programed or already have been programmed; to move on to future phases where deception is primarily what AI may have been created for. How many people would meticulously fact-check what it says by then if they continue to rely on quick/now/instant solutions?

I have never used any LLM for anything and hopefully never will be forced to use one in the future.

It still amazes me that many people I interact with on the internet use AI frequently as a shortcut for information sources, including lawyers and scientists. Despite the obvious well-publicized errors, glitches, etc. I attribute a lot of it to wanting to get a quick reply in a thread and it’s easy and fast.

Its -name is a dead giveaway. AI is artificial intelligence. Artificial means fake, not genuine. So start spreading the word. Did you use real intelligence from your own brain (providing you have one) , or fake intelligence that tells you what you should think?

I make it a point to avoid anything “AI”. lol! Lucky I was born in 1953

One thing I hear very clearly, from fellow geeks like myself, is that the term, “AI,” is pure – and dangerous – HYPE. They know better. But some people are eager to exploit it, anyway. Caveat YOU …

What’s “the truth?” In many ways, nobody(!) knows yet.

For a long time here at CTH, I made a practice of skipping over posts that begin with the words “Is this true?” or “If this is true, then…” In a way I believe they are contrary to the CTH Comment Guidelines.

I long ago added posts that begin with “According to Groc, et.al….” to my “skip” list. I’m glad they are still rare here, and I hope that continues.