I am very interested in this, in part because of what Suchir Balaji was working on, and in part because of what many people now recognize as the most valuable asset in the world of AI creation: MASS DATA COLLECTION.

Tucker Carlson sits down with Poornima Ramarao, the mother of Suchir Balaji, an AI engineer who worked with Sam Altman at OpenAI and was quite likely murdered over his concerns about how the mass data was being captured.

The effectiveness of the AI construct depends on large-scale mass data collection to frame the underlying information network the AI can review. The larger the data capture, the more accurate, effective and valuable the AI outcome. More inputs mean more reviewable data, which means a more comprehensive outcome; that’s the value. Suchir Balaji became concerned that OpenAI was capturing “copyrighted” material as part of its input data flow. Soon afterward, Balaji died under mysterious circumstances. [¹I’ll explain my concern after the video]

Chapters:

0:00 Suchir Balaji’s Career and Alleged Suicide

9:48 The Threat Balaji Posed to OpenAI

13:03 Was This Really a Suicide?

21:59 Evidence of Foul Play

35:27 The Authorities’ Response

38:47 Was Sam Altman Involved in the Death of Balaji?

46:55 Will Donald Trump Investigate Balaji’s Death?

48:57 AI Safety and Sam Altman’s Firing

55:01 Tucker Demands Action From Congressman Ro Khanna

59:23 Why Hasn’t Any Network Covered This Story?

1:01:00 More Whistleblowers Are Being Killed

¹If you understand the competitive nature of this emerging tech business, specifically the multiple billions of dollars invested in the AI race to create the best product, then you can frame what is most valuable within the industry.

The more comprehensive and massive the database, and the larger the mass data collection baseline, the more valuable the AI product is when it is deployed. The AI here is essentially an advanced search algorithm that allows the user to ask questions.

The AI does the search; the library that supports the outcome is what creates the value of the end product, the answer to the question.

As a consequence, the library or database holding the previously assembled data is worth hundreds of billions. The AI’s answer is more accurate when it has more data to review.

All of the thinking, analysis, mental labor and analysis on every subject in the world being fed into a massive data collection system creates incredibly accurate AI results. However, much of that data is proprietary, copyrighted and exclusively owned by the person who created it or the institution that commissioned the work.

Suchir Balaji was in charge of scooping up data, enmeshing it in the OpenAI library and noticed that some of the captured information was proprietary, copyrighted. Balaji raised alarms and eventually became a whistleblower, because he saw OpenAI was now capturing the exclusive mental work product of others and then adding it to their AI system to create even more value.

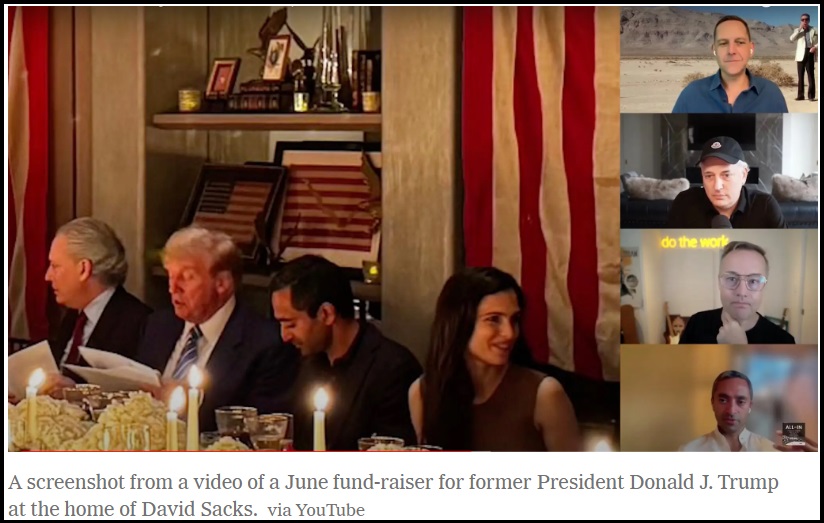

I hope that context makes sense, because it’s the baseline for my concern with Larry Ellison, Elon Musk, Peter Thiel, Vivek Ramaswamy, David Sacks and the tech bros who are also part of this advanced autonomous AI competition. There are trillions at stake.

Is DOGE really about government efficiency?… Or…

Is it possible that DOGE is just a false front for a larger, unspoken goal: accessing the largest data collection system in the world, the NSA database containing the full-spectrum metadata of every American and every electronic communication intercept on earth?

AI is valued based on mass data collection to create the library for the software to access. Is there a larger data collection system than the United States Government? The answer is no.

AI running on the backbone of the full NSA-level data collection would be the most valuable AI in the world.

Think about it.

What are the real motives and intentions of the Tech Bros here in MAGA world, and the billionaires behind them?

San Francisco’s KQED story on the death.

The new mayor has tapped an OpenAI founder for his transition team.

https://www.kqed.org/news/12020909/openai-whistleblowers-death-sf-parents-skeptical-citys-investigation

The family is planning a vigil in front of the San Francisco medical examiner’s office on Jan. 19 at 11 a.m.

Maybe I will go.^^

Don’t take your tracking device with you

Elon Musk just retweeted this video. He is making a threat with this, too.

He is not showing compassion or signaling that he thinks it’s suspicious. He is sending a message, even though OpenAI is 100% behind this and he had nothing to do with it. He is saying, “I will do this too.”

He’s also hoping this sinks OpenAI, since he hates Altman.

To be fair, Altman is a tool and, if his sister’s claims are to be believed, a pervert as well. I know, she’s “mentally ill,” and therefore we can all discredit her and listen to her family as they shower their golden child Sam with praise.

“Mental illness” can certainly be attributed to sexual and physical assault, especially when the perpetrator is powerful and seemingly “untouchable.”

It doesn’t have to be threatening to be advantageous to Musk. If OpenAI is out of the way, Musk gains enormously.

Sundance, I too watched this interview last night. You are correct about OpenAI’s business intention to make use of, as you wrote, “All of the thinking, analysis, mental labor and analysis on every subject in the world…” to output “…incredibly accurate AI results,” but your summary omits ONE POINT OF HUGELY IMPORTANT RELEVANCE raised by Suchir’s mother, Poornima. She told Tucker that Suchir had revealed to her that, in OpenAI’s processing of raw (captured/scooped-up) information, the OpenAI systems are coding that incoming “raw information” with a digit value of .5 in, .5 saved, .5 out. She clarified her meaning to Tucker: historical and still-normal coding uses a digit value of 1 in, 1 saved, 1 out (i.e., ones and zeros). That means what is being scooped up is not being collected and stored in OpenAI’s libraries with identical accuracy; instead, the captured raw information is being distorted away from its essential truth.

HORROR OF HORRORS, the unbelievability of news, information, and any communication transfer whatsoever will continue apace. Governing authorities of any institution whatsoever will prosecute at will based on falsity, and judgment and enforcement authorities will likewise proceed on false information.

“Suchir Balaji was in charge of scooping up data, enmeshing it in the OpenAI library and noticed that some of the captured information was proprietary, copyrighted. Balaji raised alarms and eventually became a whistleblower, because he saw OpenAI was now capturing the exclusive mental work product of others and then adding it to their AI system to create even more value.”

The main impetus for concern was that OpenAI was originally a non-profit brainchild. There was less concern about a non-profit company scooping up copyrighted material than there was once the company suddenly turned for-profit, thus profiting from the intellectual material of other copyright holders.

This was also a breaking point between Elon Musk and Sam Altman: Elon was part of the OpenAI launch but left when he disagreed with its for-profit direction. Elon has talked about his concerns over AI in many multi-hour podcasts. He believes AI can be used for good but can also be weaponized, and he wants government oversight of its development and use.

This poor woman said Sam Altman was a liar; Elon Musk has said the same in podcast discussions.

https://www.theverge.com/2024/11/18/24299787/elon-musk-openai-lawsuit-sam-altman-xai-google-deepmind

It seems to me the question of using so-called “copyrighted” data as training data is open. I’m not sure copyright, conceived as a government monopoly granted to publishers for “limited times,” should be extended to this.

It’s like saying that if you read a book and then write something using ideas from the book, you are “infringing.” And of course, under current copyright law, “limited times” means essentially forever.

Who said it was being used for “training” data?

Intellectual property fed into the system can be used for R&D without giving credit where credit is due, and in essence its creators can lose their entitlement to such works through others’ efforts.

This young man was worried about the ethics of people losing their intellectual property to others’ nefarious gains… and we ALL SHOULD BE WORRIED about that.

Google’s original mission was to “organize the world’s information and make it universally accessible and useful.” Their founding motto was “Don’t be evil.”

Facebook’s mission statement was to “give people the power to build community and bring the world closer together.”

Tech companies start out idealistic and end up monopolistic.

Bob Metcalfe, a pioneer of computer networks and the inventor of Ethernet, formulated Metcalfe’s Law. It states that the value of a network is proportional to the square of the number of connected users or devices in the network. In other words, each added node makes the network disproportionately more valuable: doubling the number of nodes roughly quadruples the value.
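The square in Metcalfe’s Law comes from counting the possible two-way connections among n nodes, which is n(n-1)/2. A minimal Python sketch of that arithmetic follows; the function name `pairwise_links` is a hypothetical label, and it counts possible links only, assuming (as the law does) that value tracks that count.

```python
def pairwise_links(n: int) -> int:
    """Count the distinct two-way connections possible among n nodes: n(n-1)/2."""
    return n * (n - 1) // 2


if __name__ == "__main__":
    # Doubling the node count roughly quadruples the number of possible links.
    for n in (10, 20, 40):
        print(n, pairwise_links(n))  # prints 10 45, then 20 190, then 40 780
```

Going from 10 to 20 nodes takes the link count from 45 to 190, a bit over four times. That is quadratic growth, which is what the law describes, rather than true exponential growth.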

For AI, the value of the system seems to be proportional to the size of the training datasets fed into it raised to some power N (N is probably greater than three). Expanding the datasets adds huge value.

Government and corporations do a very poor job of keeping people’s private information private. How does our society function if none of our data is private, especially since, in this data age, our private data is constantly used to give us the ability to transact in the real world? When our transactions were based on verbal and written records that were not digitized, few people other than the owner of the data had access to enough personal data to steal anything, let alone real estate. Cash is looking more and more friendly, though very inconvenient.