A remarkable conflict has emerged over the use of Artificial Intelligence (AI) software, involving the United States Government (USG), the Dept of War (DoW), and the AI software company Anthropic.

At the core of the issue is the contract under which the Dept of War uses Anthropic’s Claude AI system in military operations, in combination with various military and weapons systems. However, Anthropic is placing restrictions on the military application of its AI.

Anthropic says the AI cannot be used for defense dept autonomous weapons that do not utilize human triggering. Additionally, Anthropic is saying their system cannot be used to surveil U.S. citizens. Anthropic engineers would be the decision-makers on how the government uses it.

The Trump administration has rejected Anthropic’s demand, saying it will not permit a group of Silicon Valley engineers to determine how U.S. military applications are deployed, thereby replacing the decision-making of elected officials, military commanders, the Joint Chiefs of Staff, ultimately the President of the United States, and even the servicemembers who face life-or-death decisions.

It is a key moment for the use of AI, both in government applications and in the private sector.

♦ After several weeks of conflict between U.S. government officials and the CEO of Anthropic, Dario Amodei, President Trump finally said enough and told all agencies of government to stop using Anthropic products.

President Trump via Truth Social: “The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War, and force them to obey their Terms of Service instead of our Constitution. Their selfishness is putting AMERICAN LIVES at risk, our Troops in danger, and our National Security in JEOPARDY.

Therefore, I am directing EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic’s technology. We don’t need it, we don’t want it, and will not do business with them again! There will be a Six Month phase out period for Agencies like the Department of War who are using Anthropic’s products, at various levels. Anthropic better get their act together, and be helpful during this phase out period, or I will use the Full Power of the Presidency to make them comply, with major civil and criminal consequences to follow.

WE will decide the fate of our Country — NOT some out-of-control, Radical Left AI company run by people who have no idea what the real World is all about. Thank you for your attention to this matter. MAKE AMERICA GREAT AGAIN!”

♦ Secretary of War Pete Hegseth then responded via X:

“This week, Anthropic delivered a master class in arrogance and betrayal as well as a textbook case of how not to do business with the United States Government or the Pentagon.

Our position has never wavered and will never waver: the Department of War must have full, unrestricted access to Anthropic’s models for every LAWFUL purpose in defense of the Republic.

Instead, AnthropicAI and its CEO Dario Amodei, have chosen duplicity. Cloaked in the sanctimonious rhetoric of “effective altruism,” they have attempted to strong-arm the United States military into submission – a cowardly act of corporate virtue-signaling that places Silicon Valley ideology above American lives.

The Terms of Service of Anthropic’s defective altruism will never outweigh the safety, the readiness, or the lives of American troops on the battlefield.

Their true objective is unmistakable: to seize veto power over the operational decisions of the United States military. That is unacceptable.

As President Trump stated on Truth Social, the Commander-in-Chief and the American people alone will determine the destiny of our armed forces, not unelected tech executives.

Anthropic’s stance is fundamentally incompatible with American principles. Their relationship with the United States Armed Forces and the Federal Government has therefore been permanently altered.

In conjunction with the President’s directive for the Federal Government to cease all use of Anthropic’s technology, I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security. Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic. Anthropic will continue to provide the Department of War its services for a period of no more than six months to allow for a seamless transition to a better and more patriotic service.

America’s warfighters will never be held hostage by the ideological whims of Big Tech. This decision is final.”

♦ Anthropic then responded:

“Earlier today, Secretary of War Pete Hegseth shared on X that he is directing the Department of War to designate Anthropic a supply chain risk. This action follows months of negotiations that reached an impasse over two exceptions we requested to the lawful use of our AI model, Claude: the mass domestic surveillance of Americans and fully autonomous weapons.

We have not yet received direct communication from the Department of War or the White House on the status of our negotiations.

We have tried in good faith to reach an agreement with the Department of War, making clear that we support all lawful uses of AI for national security aside from the two narrow exceptions above. To the best of our knowledge, these exceptions have not affected a single government mission to date.

We held to our exceptions for two reasons. First, we do not believe that today’s frontier AI models are reliable enough to be used in fully autonomous weapons. Allowing current models to be used in this way would endanger America’s warfighters and civilians. Second, we believe that mass domestic surveillance of Americans constitutes a violation of fundamental rights.

Designating Anthropic as a supply chain risk would be an unprecedented action—one historically reserved for US adversaries, never before publicly applied to an American company. We are deeply saddened by these developments. As the first frontier AI company to deploy models in the US government’s classified networks, Anthropic has supported American warfighters since June 2024 and has every intention of continuing to do so.

We believe this designation would both be legally unsound and set a dangerous precedent for any American company that negotiates with the government.

No amount of intimidation or punishment from the Department of War will change our position on mass domestic surveillance or fully autonomous weapons. We will challenge any supply chain risk designation in court.” (source)

There are multiple alternative companies rapidly developing AI models that could replace the Claude system within the Dept of War. In fact, the DoW is likely to partner with OpenAI as a replacement for the Anthropic contract.

However, this conflict over use is not confined to Anthropic; it has also surfaced at Palantir. Palantir CEO Alex Karp highlighted the issue in his own comments on the Pentagon vs. Anthropic standoff.

“The core issue is who decides,” Karp says. The issue is not whether the use of the government use of AI is right; the issue is not whether you agree with the mission of the Dept of War. The real issue surrounds who will decide its use.

Karp notes, “It’s commonly known that our software is used in operational context at war.” “Do you really think the warfighter is going to trust a software company that pulls the plug because something becomes controversial?” Let that sit for a second. “Currently, when you’re a warfighter, your life depends on your software.” A group of tech engineers in Silicon Valley does not get to replace the decisions of the elected experts in national security and the commander in chief.

Palantir CEO Alex Karp told you the most important thing about the Pentagon vs. Anthropic standoff.

And nobody is connecting the dots.

Watch and save this clip, then read this thread.

Karp said something most tech CEOs would never say out loud:

“A small island in Silicon… https://t.co/jlcqnWCHEn pic.twitter.com/uNXQIGg7kF

— Milk Road AI (@MilkRoadAI) February 27, 2026

Treason, by any other name…

I’m going to miss Anthropic.

But Wordman won’t.

I’d have to say that the 4th amendment violations by USG are somewhat more treasonous.

That is between We The People & the USG. If we allow our elected representatives to betray the Constitution that’s on us.

In this instance, do we want a private entity that nobody voted for to insert itself into the decision making process?

Mass surveillance is coming. It’s not if it’s when.

It’s already here.

Chalk up another victory for the Tech Bros as we now have the entire Trump administration and Uniparty demanding domestic surveillance.

Prepare accordingly.

That happened in late 2001. The evolution has continued at a rapid pace, and why wouldn’t it?

We let them get away with it.

It’s not on me. I didn’t vote for it, and never agreed.

No, definitely not. But I would not want to even think about a rogue government being able not only to surveil us but also to order a drone strike on a US community just because the people won’t comply with whatever nonsense it is spewing. Think about that.

Every day, AI looks to me more and more like the “Beast” in Revelation.

the abomination that makes desolate.

Dario Amodei

Says it all.

Yep, quintessentially the dweeb of leftist intention, wouldn’t trust him …

Yes it does. F’ him and all WEF stooges, including those employed in the US GOV in any capacity, including members of the House of “Representatives”

So? A quarter of the Trump Administration was at the latest WEF confab.

I keep getting surprised at the number of leftist Europeans and Indians that are CEOs of our companies. Another reason to get rid of H1B visas.

Un-assimilated Indian Hindus are just as dangerous as un-assimilated Islamists.

Anyone unassimilated, even Snow Mexicans

Derp ..

Couldn’t happen to a more deserving dolt.

“Anthropic says the AI cannot be used for defense dept autonomous weapons that do not utilize human triggering. Additionally, Anthropic is saying their system cannot be used to surveil U.S. citizens.”

… shouldn’t we be IN FAVOR of those things?

Not with a bunch of Silicon Valley woke boys and DEI graduates defining what is “autonomous,” what is “surveillance” and who falls under the protected category of a “U.S. citizen.”

No. We should not be in favor of that approach.

Agreed, I don’t want tech bros making the call.

has “our” government committed to the same?

That, at least, is theoretically within the control of the American people at large

Did you read President Trump’s Truth posted above?

“WE will decide the fate of our Country — NOT some out-of-control, Radical Left AI company run by people who have no idea what the real World is all about.

Thank you for your attention to this matter.

MAKE AMERICA GREAT AGAIN!”

And Secretary Hegseth:

“As President Trump stated on Truth Social, the Commander-in-Chief and the American people alone will determine the destiny of our armed forces, not unelected tech executives.”

Amen, Sundance! As Bill Clinton declared years ago, “It depends on what the meaning of the word ‘is’ is.” Soy boys, Karens and They/thems should NEVER be in charge!!!

US citizen and surveillance are two terms not open to debate. Autonomous? Ok.

Doesn’t this lead back to the FISA court??

Or a Minority Report dystopian future…

That is a legitimate position. However, we have already seen that the US Government (DOJ, FBI, FISA) is incapable of making these distinctions as well.

No.

With an exclamation point.

We elected a President, and he will decide.

President Trump loves America.

Silicon Valley loves themselves.

For that reason alone, I side with our President.

I also would not want anyone taking control of, or being able to assist, a tyrannical administration (i.e., Biden, Obama) that turned the government against patriotic MAGA Americans.

Congress must put laws in place to protect Americans from AI misuse (surveillance, autonomous weapons, et al.) … make it an act of treason, with the death penalty as the only course of action against anyone using AI against the American people.

Congress cannot put together and pass a budget, a basic task. Don’t count on Congress for anything.

True today, but we have a chance in midterms to replace a lot of seats.

so never say never… just not today.

Congress is all on board with mass surveillance. To protect them, from us.

Good luck with that.

Laws in place to protect the American citizens by Congress!?

Those days are gone.

The intelligence agencies would simultaneously be working on ways around those laws. Just look at all the illegal surveillance of President Trump!

If you want privacy it’s up to YOU to figure out attaining it, this has been made clear by Sundance for years.

Good for President Trump!

AI companies created this technology, but they have no right to decide how it will be used.

Money talks so another AI will provide the same technology without the constraints.

Of course AI is full of moral arguments, but the AI companies have no seat at the table and need to understand that.

If they are so concerned they should have not invented these AI weapons.

Think about Oppenheimer: he was haunted by what he helped create, if history is accurate.

Thank you for clarifying. I am not a tech person and was a little bit unsure on this subject.

Yes–they routinely refer to foreign nationals as “citizens”, for example.

Our “neighbors” is the current shtick.

I wondered the same thing; the questions are: who defines, and what are the definitions?

TY for the clarification as to the former.

By definition, when coding AI for autonomous decisions, some human, whether in Silicon Valley or in government, will have to decide the parameters that define “autonomous,” either by placing the rules directly in the code (Silicon Valley) or in some permissions file the program reads (Silicon Valley or the end user of the program).

The problem occurs when actually giving permissions to an AI program to act autonomously without the extra step of human verification.

The AI program, like any program, will follow its coding. It doesn’t think. For example, this past December part of Amazon Web Services was out for 13 hours due to AI doing what it was told to do.

What happened with the AWS outage was that the program had been granted high-level permissions to autonomously fix any problems it encountered in whatever way it saw fit. In December, the fix it decided was best for a given problem was to completely delete a portion of the production environment and rebuild it, resulting in a 13-hour production outage.

I’d hate to see that happen with autonomous permissions granted to a weapons system, causing it either to take itself offline or to self-launch. Some form of safety check needs to be implemented.

So in this case I agree that some form of “human triggering” requirement is not an unreasonable request.

This issue is not the triggering. It’s who decides what and when. Two separate issues.

Nope, not when it comes to the code. Regardless of who decides what and when, if they grant that authority to the program there still needs to be a fail safe to stop it from implementing an unforeseen circumstance. The Amazon outage is a prime example of unintended consequences.

It’s also the reason there are so many checks and balances for launching our nuclear missiles.

No computer code is perfect so we would never grant the weapons systems autonomous authority to launch.

My comment concerns policy. Your comment concerns nuts and bolts (how to execute). I am not arguing with your execution concerns, because I’m not speaking to that.

Policy is that the blue-haired idiots in S. Valley don’t get to decide anything. They produce a product and someone else decides if they want that product. In this case, Anthropic made decisions about the product they sell and the government said no dice, we don’t want it.

That is not for the AI company to decide.

Concur. But I wonder whether there’s more to Amodei’s stance than just reflexive virtue signaling, something akin to a deep-seated leftist paranoia about autonomous systems being unleashed specifically against them and their kind.

Sundance, thanks so much for making sense of this Anthropic issue. I wanted to understand the basics, but it was confusing to me, so I appreciate the clarification. Reminds me of the old 1964 Cold War thriller, Fail-Safe…

It confused me also. Their wording is designed to do that. Thank you Sundance. You always boil it down to common sense.

Following on what Sundance says, there is a high probability that the Woke Boys would consider ANY surveillance of antifa thugs anti-constitutional, and any uber-intrusive, abusive, overwhelming surveillance of patriot citizens justified.

Hmm. Big Tech Bros, woke DEI boys defining what is surveillance and who falls under protected categories….or…Mark Milley, Jake Sullivan, Lloyd Austin, James Boasberg, John Roberts, Samantha Power, Victoria Nuland, et al?

Hmm…would I like to eat a bullet…or…drink the poison.

No thanks.

Much much better response.

Surveillance on conservatives-good.

On leftists and liberals-bad.

Initially, I supported Anthropic – yes, we should be in favor of those things.

Then Sundance made sense with: “Not with a bunch of Silicon Valley woke boys and DEI graduates defining what is ‘autonomous,’ what is ‘surveillance’ and who falls under the protected category of a ‘U.S. citizen.’”

It reminds me of the Patriot Act: used to fight ‘terrorism,’ but then Obama/Holder/Biden redefined who is a ‘terrorist.’

It’s the definitions that matter, and who makes those definitions.

Think of ‘Moms for Liberty,’ J6ers, and patriotic truckers being defined as terrorists, and the importance of definitions becomes clear.

Thanks again, Sundance.

Next, the issue becomes: but can we trust the government?

That mirrored my thinking process exactly.

This is why I love this site.

He would be A-OK if a Dem were in charge. It is personal with this pasty-faced anti-American.

Do you think this government, in bed with most of the rest of Big Tech and continuing with well-known FISA violations, should be trusted with doing so instead? And don’t you think that autonomous is already defined, as is surveillance? I thought the truth had no agenda, SD, no matter who verbalised it. But now we’re taking Cliff Notes from Palantir?

You sound like you know a few of these types, too.

(and you’re not wrong)

Continued BLESSINGS of Discernment, Strength, Wisdom, Endurance, and Protection from The LORD GOD ALMIGHTY!

AMEN, AMEN, & AMEN

I couldn’t have said it better SD. I really don’t trust anyone, but I have to completely remove a woke corporation from the equation entirely.

Exactly, Sundance. Who decides is a source of almost infinite power and their demand uncouples those decisions from any accountability and consequences. The latest attempt at a bloodless coup via Silly Valley useful idiots.

Of those things, yes. The decisionmakers, however, should be elected officials following the law. The goals are just, but how best do you achieve accountability? That’s the conundrum. And we’ve seen a chronic lack of accountability of government officials in the past couple or more decades. Which makes this a much more complex issue. But the President and the DoW must uphold their Constitutional authority. It’s for others to strive towards accountability.

exactly. if the government had committed to do the same independently, this would be a non-issue. and if the exact same thing had been announced during the biden regime, we would be saying exactly the opposite.

just because trump is supposedly “in charge” does not make me trust the leviathan any more than over the previous 30 years.

Good point being made here. At the very least we need to set the framework for accountability to the Constitution, not corporations.

Agree about government currently being unaccountable but if we can restore voter integrity, this is our best chance.

Seems like we have seen how this movie ends…Skynet anyone?

I agree that no one should be able to tell the President or anyone else on down the chain of command how to fight a war.

I also agree that AI should NEVER…under any circumstances…be used ungated in direct control of any weaponry….or mass surveillance of US citizens.

Two NARROWLY tailored exceptions seem to thread the needle…no direct weapons control…no mass surveillance of US citizens.

I think the more troubling aspect is that…the US Government…under our President Trump…and Sec. Def. Pete Hegseth…WANT the freedom and ability to do such things with AI.

Even if we trust our current leadership with such dangerous tools…and here is a hint…we shouldn’t…then it only follows we shouldn’t trust the next 0bama with these tools.

Which means that it needs to be a matter of policy until it is a matter of law that such tools cannot be used in such fashion by ANYONE at any time.

Correct. After PDJT is PJDV/Palantir? Worrying.

Well said.

This is akin to Lockheed Martin saying: we’ll manufacture and sell you a fighter jet, but with the caveat that we (Lockheed) will tell you where, when, and how you can fly it and dictate which enemies the jet can or cannot engage. The answer is rightfully: no thank you, we’ll take our business elsewhere. Note also that it would not even be constitutional for the Commander in Chief to accept some of those terms. By the oath of his office, Anthropic had to be given the middle finger.

Yes, but a company shouldn’t have veto power over an elected government. Citizens need to become more knowledgeable and engaged in the political process so that they elect better officials. This should be taught more in schools, especially the history of failed governments, critical thinking, debating, ethics, etc. Citizens need a strong, uncorrupted justice system. Many laws could be changed to make it harder for corruption to exist. There needs to be more accountability for elected officials. America and the world are all at a crisis point in the war between nationalism and globalism.

I agree with you…

yes, Muley, believe we should be in favor

anthropic is drawing a line at mass, out of control civilian surveillance and non-human, a.i. controlled weapon systems.

dario is loath to leave target identification and mission execution totally up to algorithms as the final arbiter, sans any human involvement.

he’s making his call on what i believe to be purely moral grounds and at great cost, perhaps even an existential cost, to the highly successful engine he has created

so easily he could capitulate and become a multi-billionaire as his decision not to aid and abet mass civilian surveillance and automated weapon systems may cost him everything

i simply don’t see the woke angle he’s being bludgeoned with, am i missing something?

oh well, it may all soon be moot if the newest engine soon to be released by deepseek lives up to the hype, as it could possibly leapfrog anthropic’s opus4.6, and, lo and behold, it’s a free and an open source llm

developments in the a.i. space are moving at lightspeed

America’s competitor countries and its enemies must be licking their lips in anticipation of receiving detailed info about every national security action taken – the absurdity.

Karp is a very bad man. I’m not sure what else to say about this.

The Naivete in some of these posts is stunning. I guess some people want USA to be weaker than our enemies who have no conscience

I don’t know enough to have a completely formed opinion. Loosely following to date. Kind of supporting Anthropic on this. Palantir et al are extremely worrying.

As Palantir uses Anthropic-supplied software, shouldn’t you be concerned about Anthropic too?

Fantastic to see that a leader of a tech company has a conscience. No surprise that our friend in Palantir sees it differently.

Palantir now has to stop using Anthropic or lose their govt contracts.

AI (I call it SI – “SIMULATED intelligence”) is nothing more than enhanced computing and the basic rule still applies:

GARBAGE IN ——> GARBAGE OUT

Personally, I will NEVER surrender my God-given gift of human intelligence to decisions made by some woke geek based on their beliefs.

We may not have a choice….

And THAT is the real danger…..

We will always have a choice.

And it may be to die on exactly this hill.

Thank you. That GI-GO pattern woke me up in the middle of the night. With “definitions may vary” being tossed about so freely, who decides?

It’s not even “intelligence.” It is only as intelligent as the person who put in the code….IQ matters..

“The issue is not whether the use of the government use of AI is right; the issue is not whether you agree with the mission of the Dept of War. The real issue surrounds who will decide its use.”

Like Palin’s “death panels.” Who gets to decide?

bottom line…

There is a reason trigger guards exist….

And have for hundreds of years….

We know the safeguards of firearms….

What are the safeguards of AI?

We live in dangerous times….

How can anyone trust a computer? A computer does not understand “chain of command” “loyalty to our country and those in charge of running it” I have been skeptical of AI from the moment I first heard about it. We have already put too much faith in big tech.

Uncharted waters fraught with dangerous and existential possibilities with as yet truly untested AI technology.

Given that the cancerous DS itself remains entrenched and its operatives rogue, the question it seems to me is who watches the watchmen after President Trump departs office.

Unanswerable.

Kind of a fun(?) intellectual argument without the anger of the other top story of the day. Cheers.

Oh man!!!!!.😎👍🏻

….I needed a breather.

ANTHROPIC..HAL

Or ‘smartmatic’ or ‘dominion’ voting machines?

This says it all.

Follow the money…….

“The New York Times reveal that Google owns a 14% stake in the company and is set to pour another $750 million into it this year through a convertible debt deal. In total, Google’s investment in Anthropic now exceeds $3 billion.”

Amazon and its cloud giant AWS estimated the fair value of its stake in Anthropic at $14 billion at the end of December 2024.

Anthropic and Amazon have a close partnership that includes an arrangement to use AWS’s AI chips.

The Department of Justice dropped its antitrust proposal to force Google to sell its investments in artificial intelligence companies, including Anthropic.

https://www.businessinsider.com/amazon-investment-anthropic-worth-14-billion-2025-2

https://techcrunch.com/2025/03/11/google-has-given-anthropic-more-funding-than-previously-known-show-new-filings/

Now that you brought it up.

Pete and President Trump made the right call ……… the AI designers and programmers need to be Constitutional Compliant, no exceptions.

JMO.

A recent statement about the current state of AI: “The most recent AI models make decisions that feel like judgment. They show something that looked like taste: an intuitive sense of what the right call was, not just the technically correct one.”

“judgement” …… “right call”: In the real world from 1776 to 2026, the operative description of those actions by individual freedom-loving citizens in our Constitutional Republic with its Constitution ….. are different …… from the “globalist / communist” group think of citizens of other countries, aka China, Russia, etc.

_______________________________________________________________________

The problem: 2026 AI programmers are not Constitutional Compliant ….. currently, they are trampling all over the rights and freedoms of individuals and businesses ….. witness the damages being done in the “suicide chat rooms”.

Heck, they don’t even have to be citizens of the United States to run free among our digital highways and byways causing damage to American companies and citizens.

They should have a Constitutional Qualification License to practice their AI coding that will be accessed within the USA.

Does their code protect American’s Constitutional Rights and Freedoms?

Look what happened when they let every “juan, pedro and jose” from Mexico, or “patel” from India, have a trucker’s license in the blue states …… that gave them the ability to drive the highways and byways of the USA killing innocent citizens because they never passed the tests required to get a trucker’s license.

Secretary Duffy just revoked 17,000 California licenses illegally issued. And he is just getting started.

AI programmers must be qualified to produce code that is Constitutional Compliant if their code is available to Americans.

Now is the time to nip the AI Constitutional Compliant problem in the bud.

This doesn’t even touch on the exposure to our national security caused by non-Americans producing AI code that isn’t Constitutional Compliant but is allowed to be accessed by government departments and employees.

We have just witnessed how federal employees can weaponize the government against citizens ……. can you imagine what harm they can do with AI software that doesn’t protect American’s Constitutional rights and freedom?

As for …… “we have to do it to stay up with the Chinese” …… really?

You are trying to match China’s version of “judgement” / “right call”?

You want to live in a world driven by their values?

Nope, AI in America has to be “Constitutional Compliant,” not “unconstitutional, big brother compliant.”

MAGA / America First isn’t just our motto, it is our duty!

Need some visibility into the Terms and Conditions included in the actual contract between Anthropic and DoW before anybody can make a single comment.

Not that I like what Anthropic is doing but it would be so easy for the Defense Acquisition Folks to purposely sabotage a contract to create this issue.

Especially those regarding:

Usage

Ownership and Control

Definitions related to AI (yep there is that word again … DEFINITIONS)

…. and LIABILITIES

Especially if this contract came into existence before or in early 2025, and do not discount “questionable” actions by members of the Defense Acquisition Community in structuring the contract in a manner that allows the likes of Anthropic and Palantir.

This sentence from the article above indicates Anthropic feels it has a strong contractual say on the matters mentioned …. “The core issue is who decides,” Karp says. The issue is not whether the use of the government use of AI is right; the issue is not whether you agree with the mission of the Dept of War. The real issue surrounds who will decide its use.”

Don’t be surprised if a lawfare case is brought, if the Contractual Terms and Conditions can be used favorably by Anthropic.

Is it just me or is that redundant language by Karp? Use of the use of the use? (Isn’t this dude a brilliant mind?)

Regardless, the use of spying on American citizens must be addressed. Assuming Palantir’s facial recognition technology was used by Biden’s DOJ/FIB on Grandma during J6. Guessing Tom Homan is doing the same to track down illegal rapists & murderers.

The wife thinks this is all 666 Mark of the beast stuff. She’s probably right.

Once the DEI soy boys are in charge again, they’ll have the AI search out us and destroy us …..oh the AI did it, not us!

This AI stuff is all bad news no matter where it’s being placed. It’s already made nearly everything on the internet and social media completely untrustworthy (as if it wasn’t already).

You cannot trust any picture you see, any video you view, or anything you read. It will just get exponentially worse until the entire world we live in is fake top to bottom, created by a bleeping computer bot.

We saw what these tech empires did during covid. What do people think is coming? It ain’t good, I know that much.

Exactly my thoughts about this. When I first heard about AI I thought “now we the people cannot know the real story about anything.” What if the President wants to put out a warning to the people about something harmful? Who will believe it when we have allowed something like AI to be used by anybody who wants to use it? We are stupid people to go along with this. No guardrails.

The LLMs that AI relies on scour selected regions of the internet when giving answers. It is therefore necessary to fill those regions with utter BS.

Anthropic’s goals sound reasonable: no machine trigger-pulling and no citizen surveillance.

Yet we know how Leftists twist stuff. For example, calling the Police State Legal Jihad against PDT the “Rule of Law”.

They may be right, but it would make left-wing programmers the decision-makers, not the People’s presidents.

A swing and a miss, Anthropic.

ps: that reminds me of how corrupt Voting CheatWare Companies are given Proprietary Control over the People’s Elections by crooked Soros Election officials.

I support the decision making of the Trump administration in this matter for purely pragmatic reasons.

In a fair, just, and sane world, I would absolutely oppose such a doctrine regarding weapons use on a pro-life, pro-human basis.

This is war though. The most dominant moral necessity is to WIN.

If we do not employ such technology, our enemy will then simply employ/deploy it against us.

An evil necessity in an evil world.

Agree – unless someone with a backbone stands up against it. It is up to we the people to sniff this kind of treachery out and come against it.

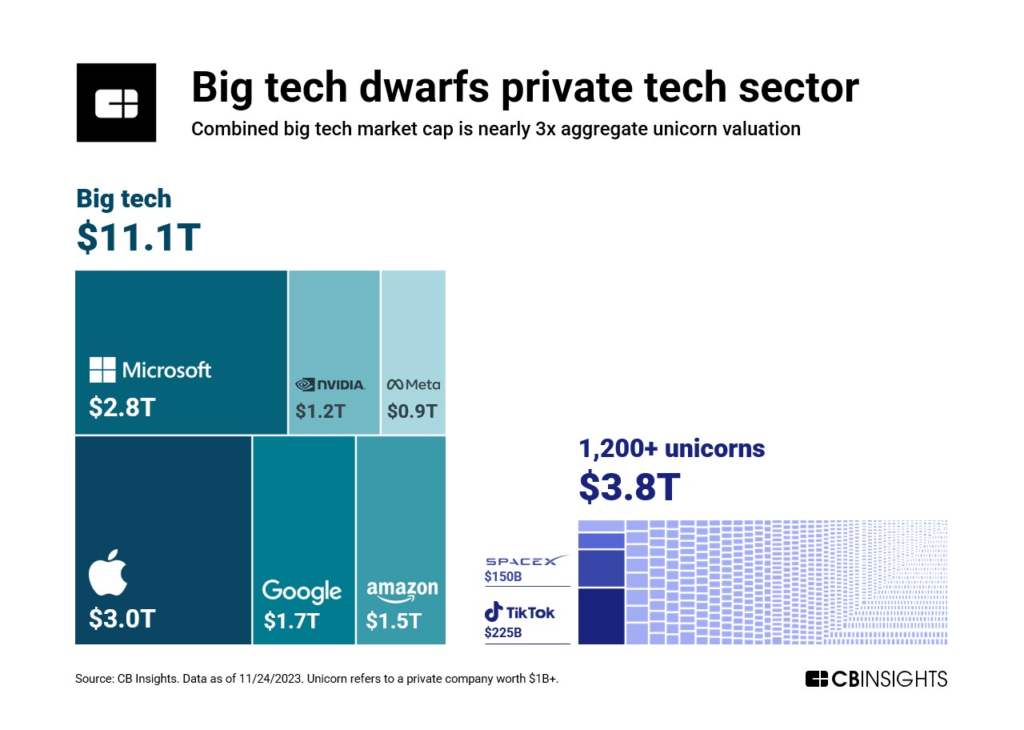

This is the landscape on which the BigTech battles that Sundance has been giving us insight into play out.

Big(Brother)Tech Market Cap vs rest of Tech Sector

__________________________________________

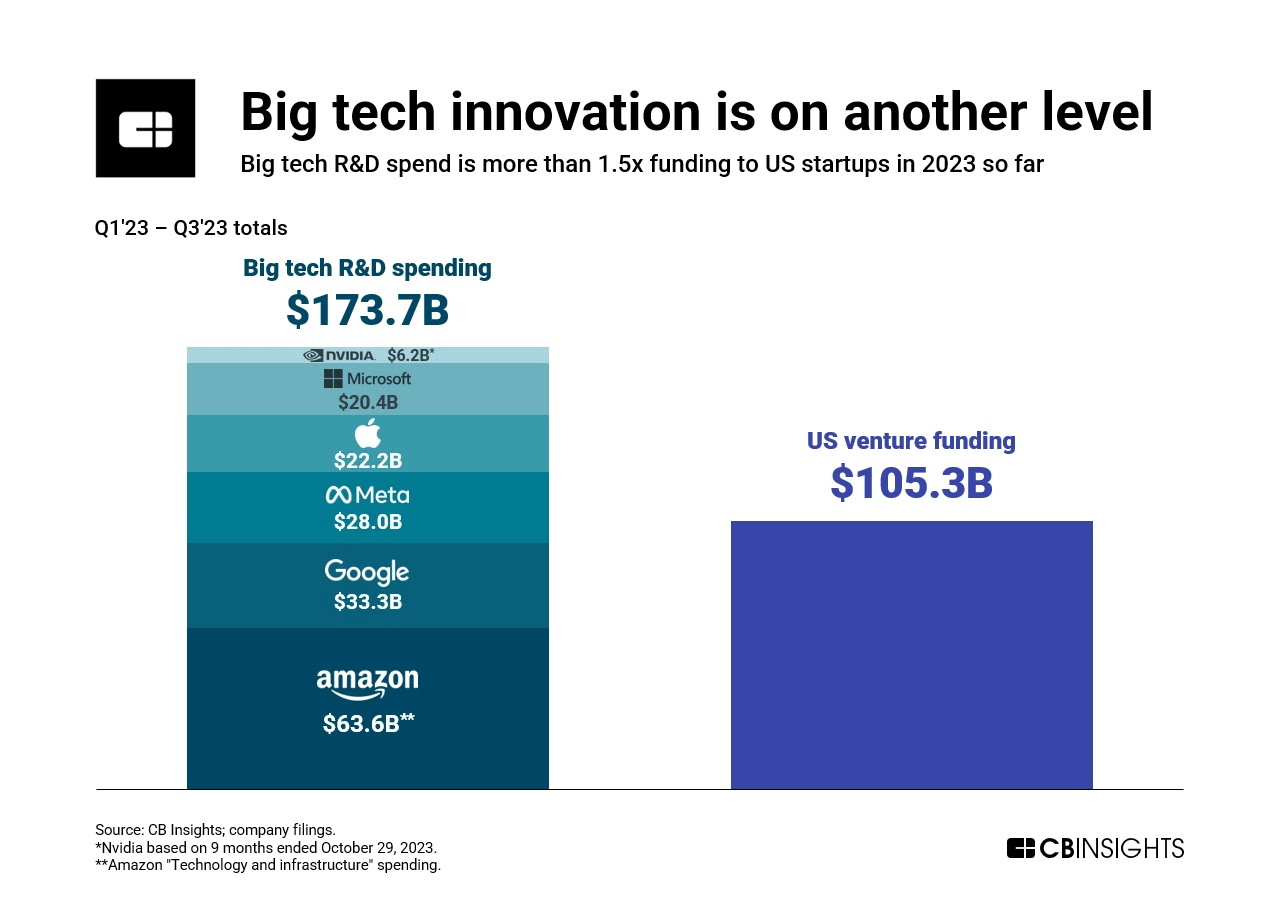

Big(Brother)Tech R&D spending vs US Venture Capital Spending

__________________________________________

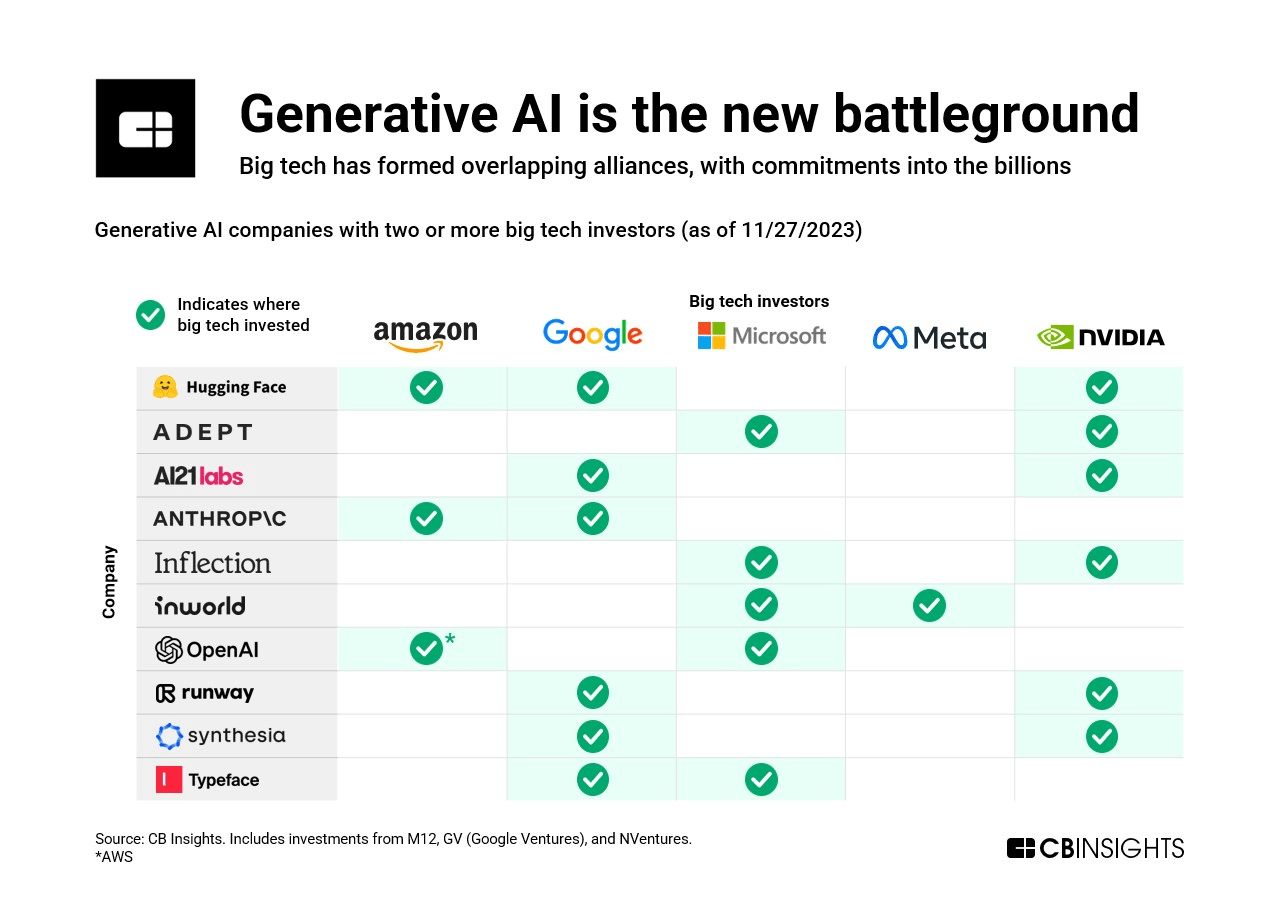

Big(Brother)Tech controls AI…

What does Anthropic gain by taking this stance and losing this government contract? What is their motive? I do know I don’t trust the government. Palantir, Thiel, Karp? Not them either. Don’t know enough right now.

The evil which funds BigTech couldn’t care less about the contract or even Anthropic itself. That’s all a bit of pocket change for them. They want to retain control of our Government. Trump and Hegseth don’t play well with them.

JustScott hinted at a different BigTech agenda being worked through Anthropic in this comment.

I was told a different perspective by someone whom I have great respect for, “yeah, but Anthropic was demanding access to all the USGov data.”

Now what’s yet to be seen is if the New Tech Oligarchs are really any different than the Old Tech Oligarchs.

Losing this contract furthers their diabolical plan how?

Losing it doesn’t advance the Agenda. Neither does winning it if Trump and Hegseth determine how it’s used.

Moral high ground?

A direct say (or influence) in global politics by controlling what they can/can’t do?

Army combat arms guy here.

The shape of battle in Ukraine TODAY includes autonomous drones looking for pre-programmed vehicle shapes. Requiring human approval of a strike introduces a connection that can be jammed. This is what we could face on the battlefield NOW. The future is just going to be even more dangerous.

Can we have a blue-haired communist tech support guy deciding to de-activate this (and any other) capability just because Bluesky told them America bad?

The US Constitution determines who controls the US military, not some random tech oligarch.

This is the correct decision and it’s not even close.

Putting aside how AI in geopolitical wargames has a predilection to default to nuclear war…. What is your stance on the government surveillance part of this story? Anthropic is seemingly against AI surveillance of US citizens. Layers.

“seemingly” is the key…..

Anthropic is owned lock, stock and barrel by BigTech. BigTech is entirely about mass surveillance of everyone and everything.

Indeed, Anthropic/BigTech don’t want the US Government using Claude for surveillance of US citizens because that is their monopoly.

As I see it, the decision to allow AI to make trigger decisions was decided by China, which has a world domination agenda.

While I don’t like the idea of AI trigger decisions, that horse has left the barn. And losing an AI war with China will have far worse outcomes for the US than enabling some government control of AI systems rather than Silicon Valley control of AI systems.

All that being said, we certainly live in dangerous times.

The door opens for Musk and Grok

Sheesh! The whole world will benefit from the word Sheesh, again! Only the Government could not see this crap coming before it actually arrives. Maybe they are also the enemy. They revealed themselves at the SOTU. They will never do that unless they believe they are winning. They were brainwashed long before they could learn how to use common sense. By the way, both of Obama’s books were based on his own brainwashing.

We are already at war with Communism, wash and repeat, repeatedly.

I cannot understand why it was used in the first place.

Do a search for “ai claude data breach”.

These are the top 4 results on my internet search engine:

https://www.bloomberg.com/news/articles/2026-02-25/hacker-used-anthropic-s-claude-to-steal-sensitive-mexican-data

https://www.securityweek.com/claude-code-flaws-exposed-developer-devices-to-silent-hacking/

https://www.anthropic.com/news/disrupting-AI-espionage

https://www.packetlabs.net/posts/claude-ai-breach/

Let’s turn the argument around to look at it from another viewpoint.

Let’s say that DoW decides to go with OpenAI, and OpenAI is now used for autonomous weapons.

Who decides who lives or dies? Who is accountable when a civilian dies, or when the wrong target is hit?

Bear in mind that the military already has several different kinds of autonomous weapons systems in its arsenal that “think” and make “decisions” about targeting, go/no-go, and even self-destruct, and these have been used in combat. These are not controversial weapons to some. To others, they reflect a growing and accelerating path toward weapons of war that are deployed with effects that are not necessarily reliable and can produce unknown results. There are some examples of this happening, such as incorrectly targeting friendly forces. As such, most of these weapons do have a human controller who is ready to, and can, redirect the weapon or cause it to self-destruct. But some of these weapons cannot be “manned.” They literally fly a mission with a general mission guideline, which is far from a restriction to prevent mishaps.

So the question really is this: have these newer technologies been adequately tested to ensure they are effective and can be remotely controlled, at least as a measure to guard against the unforeseen possibility that the onboard AI is not making the proper decisions?

Likewise, I am NOT in favor of allowing any kind of AI for the surveillance of human beings. I stand firm on this opinion for a simple reason: Congress continues to ignore the unconstitutional application of Section 702. We KNOW there have been millions of illegal queries based on NSA hoovering of data from US CITIZENS ON US SOIL. And that is happening now, in the present. Adding AI to this problem is not going to solve it. If anything, it is going to be another reason for Congress to justify what we know is wrong and unconstitutional, and it will give more power to the secret police state.

These are my thoughts on the subject at the moment.

Here is what can change my mind:

Show how these weapons are designed with a fail-safe.

Show how AI cannot be used to get around the protections against surveillance of US citizens on US soil (looking at you, Palantir and other “private” companies).

If I can see how these systems operate, and that they have safeguards with real consequences for unlawful use, then I might be able to come around to the notion that AI is going to actually make me safer, our troops more effective, and our combat missions effective.

God Bless America

“The real issue surrounds who will decide its use.”

That is the real issue.

And without a doubt, I do not want a blue haired person of confused sexuality making any type of decision concerning anything having to do with me or my life AT ALL.

The thought of them having the ability to make such a decision, especially concerning war and using weapons, is ghastly.

💯 👍

This post and the one Sundance gave on the same issue are the best posts of the day.

Anthropic’s (social) engineers don’t need to get a seat at the cabinet/war room table. They think they can just insert themselves into the decision-making chain of command? Such hubris! Govt surveillance can impose itself via any venue at any time, and that is an unfortunate circumstance of modern tech, but national defense must carry on bigger and better whenever possible.

MNN BREAKING…

*BARACK OBAMA: “I NEVER MET THE AYATOLLAH, NEVER HAD ANY COMMUNICATION WITH HIM, NEVER FLEW ON HIS PLANE AND NEVER WENT TO AYATOLLAH ISLAND. HOWEVER, FOR CHARITY WORK, MY HUSBAND DID.”

And he never inhaled, either.

He never exhaled, Raven.

AI is a national security matter; one can expect the govt to push toward tighter de facto control in the coming years without crossing into full ownership.

Currently, I trust neither side.

Remember a day or two ago when someone pointed out that $230+ billion in stock market value was erased in Tech in just 48 hours over a single blog post related to Claude?

Interesting timing, no?

This says it all:

Yes, it does.

I hope this is just the 1st of many dominoes lined up and about to collapse.

What about the back doors the coders leave behind?

I worked for years in IT/IS, and it is not just the back doors within the code that are problematic; it can be doors left open on the most blasé of networking equipment that allow evil people “in” to do major damage.

rng

Equipment manufacturers enter basic user IDs and passwords on their equipment.

Companies that purchase the equipment are then expected to know that the first thing they should do before going live is to change that basic user ID and password. Until they do that their system is essentially left open.

Some companies are so small, or so understaffed, or so overstaffed with DEI, or whatever, that those basic yet critical changes are never made.

I’ve watched as businesses that believed their systems were absolutely secure were easily “broken into” through the use of a manufacturer’s user ID and password.

RNG, too, but sometimes it is just poor planning that can take a business down.

Reminds me of a line in A Christmas Carol. In order to get their help, they become the company.

This is a serious blow to the DS/IC shadow Govt.

Man did those f-ers get close this time.

If our President WASN’T President Trump, it would have been over.

Everything turned over to the UNELECTED, worldwide shadow BLOB !!!!!

Thank you Mr. President and Mr. Hegseth for saving the Republic.

A natural human right is the right to contract. Feels like we are forgetting that and going to tribalism. Pretty sure the Dems and Repubs would immediately flip positions if Trump were a Democrat here. I do not want a government that can coerce me into providing services against my conscience. Do you?

You are missing the point. The point is not that the government can be trusted. You are correct about that.

Neither choice is good. It’s about playing the odds as to where your best chance lies. Silicon Valley already has shown where their loyalty lies. At least if we attempt to restore our republic with voter integrity and rule of law, there is a chance.

Choose wisely. Absolute power corrupts absolutely. Our government restored to functioning Under God as established is our ONLY chance.

A contract won’t be agreed when either side finds the terms onerous. Now Anthropic doesn’t have to provide the services against their ‘altruistic’ conscience. The freedom to refuse to buy a product is an equally valid decision.

It is much easier to deal with this in China where the government controls everything.

Not a fan of AI. I see it as an effort for total control and surveillance. They want to know everything about everyone, just like China, record every keystroke ever made and everywhere everyone has ever been. Unfortunately, the government wants to know this about us and it should be the other way around. The government works for us, and they are stealing from us. We need this level of accountability on THEM.

Wow. Anthropic thought Pres Trump would cave. A huge miscalculation by any measure.

Right now the CEO is on the phone with Norm Eisen screaming “but you told me they wouldn’t do that!!!!”

Who would name their Ai “Claude”? How gay.

Claude Monet, Claude Debussy, Claude Rains and many others would take issue with that.

Well, Larry already announced the plan: “and citizens will be on their best behavior because we are constantly recording, monitoring and reporting everything that is going on.”

Go off script at your peril.

AI was pushed by TRUMP big time. But the dangers are very real. There are times on YouTube when I don’t know if something is AI or a real person. And they have photos/videos of “real people” talking, but it is AI generated.

This is just the beginning of AI. Elon told us to be VERY careful; it can be used for EVIL! All the BILLIONS/Trillions spent to make super labs with their own energy grid, … and plans to make orbital super labs … circling around the globe … is very scary. I know Trump doesn’t want to be left behind in TECH and allow other countries to develop AI, but … it’s a very dangerous situation. NON-human intelligence growing at light speed. Non-humans out-thinking real humans … For the last few decades, men with wisdom have tried to WARN THE WORLD by creating movies/books/etc. to alert us to the dangers.

Trump is president now, but in 2 years Leftist Evil Doers could gain control of the Presidency … like they installed Biden, through Stealing the election … and Evil Doers will command these AI centers. Think LONG TERM.

The schizophrenic nature of current Ai makes autonomous weapons very unreliable, thus, dangerous.

That is at the core of Anthropic’s concerns.

The CEO of Anthropic is an absolute certifiable nutcase. It’s almost like a cult. The product is also hot garbage

What if your software starts killing your own soldiers?

There are two sides to this coin. One side advocates for AI to possess all-encompassing knowledge of Americans’ information and to incorporate fully autonomous warfare capabilities into AI-enabled weapons. They assure us that they will monitor this software across their vast data points of entry, claiming to be exceptional managers. This includes any communications that could be spoofed to appear as legitimate commands from the Chain of Command (COC). Consider that: it’s inside the wire, and 70% of the safeguards have been removed.

On the other side, there are those who want to restrict the development of untested AI software that is on the brink of becoming fully autonomous or may already be at that point in a lab setting. For me, the decision about which side of the coin to support is not hard to make. No need to make me cry uncle.

How a billionaire-backed network of AI advisers took over Washington

(10/13/23) FTA – The fellows funded by Open Philanthropy, financed primarily by Facebook co-founder and Asana CEO Dustin Moskovitz and his wife Cari Tuna, are already involved in negotiations that will shape Capitol Hill’s accelerating plans to regulate AI.

Acting through the little-known Horizon Institute for Public Service, a nonprofit that Open Philanthropy effectively created in 2022, the group is funding the salaries of tech fellows in key Senate offices, according to documents and interviews.

Senate Majority Leader Chuck Schumer’s top three lieutenants on AI legislation — Sens. Martin Heinrich (D-N.M.), Mike Rounds (R-S.D.) and Todd Young (R-Ind.) — each have a Horizon fellow working on AI or biosecurity, a closely related issue. The office of Sen. Richard Blumenthal (D-Conn.), a powerful member of the Senate Judiciary Committee who recently unveiled plans for an AI licensing regime, includes a Horizon AI fellow who worked at OpenAI immediately before coming to Congress, according to his bio on Horizon’s web site.

Current and former Horizon AI fellows with salaries funded by Open Philanthropy are now working at the Department of Defense, the Department of Homeland Security and the State Department, as well as in the House Science Committee and Senate Commerce Committee, two crucial bodies in the development of AI rules. …In 2022, Open Philanthropy set aside nearly $3 million to pay for what ultimately became the initial cohort of Horizon fellows.

Moskovitz was also an early backer of Anthropic, a two-year-old AI company founded by a former OpenAI executive, participating in a $124 million investment in the company in 2021. Luke Muehlhauser, Open Philanthropy’s senior program officer for AI governance and policy, is one of Anthropic’s four board members. And Holden Karnofsky, Open Philanthropy’s former CEO and current director of AI strategy, is married to the president of Anthropic, Daniela Amodei. Anthropic’s CEO, Dario Amodei, is Karnofsky’s former roommate.

When asked about the company’s ties to Open Philanthropy or its policy goals in Washington, spokespeople for Anthropic declined to comment on the record.

Anthropic is fast emerging as another titan of the young industry: Amazon is expected to invest up to $4 billion in a partnership with Anthropic, and the company is now in talks with Google and other investors about an additional $2 billion investment.

In a statement, Moskovitz pledged that any monetary returns from his investment in Anthropic “will be entirely redirected back into our philanthropic work.”

https://www.politico.com/news/2023/10/13/open-philanthropy-funding-ai-policy-00121362

A quick Wikipedia search shows that from November 2014 until October 2015, Dario worked at Baidu, a Chinese multinational technology company specializing in internet services and AI.

In 2014, Baidu also appointed Dr Andrew Ng (co-founder and head of Google Brain and board member of Amazon) as chief scientist to lead Baidu Research in Silicon Valley and Beijing.

Anthropic said they will not honor their contracts with the US government. OK, guys, you just lost those contracts. Buh-bye!