A remarkable conflict has emerged over the use of Artificial Intelligence (AI) software, involving the United States Government (USG), the Dept of War (DoW) and the AI software company Anthropic.

At the core of the issue is the USG contract with Anthropic for use of its Claude AI system in military operations. The Dept of War has a contract with Anthropic to use its software in combination with various military and weapons systems. However, Anthropic is putting restrictions on the military application of its AI.

Anthropic says the AI cannot be used for defense dept autonomous weapons that do not utilize human triggering. Additionally, Anthropic is saying their system cannot be used to surveil U.S. citizens. Anthropic engineers would be the decisionmakers on the government use.

The Trump administration has rejected the demand of Anthropic, saying they will not permit a Silicon Valley group of engineers to determine deployment of U.S. applications, thereby replacing the decision-making of elected officials, military commanders, the Joint Chiefs of Staff, and ultimately the President of the United States and even military servicemembers who are facing life or death decisions.

It is a key moment for the use of AI in both government and private-sector applications.

♦ After several weeks of conflict between U.S. government officials and the CEO of Anthropic, Dario Amodei, President Trump finally said enough and told all agencies of government to stop using Anthropic products.

President Trump via Truth Social: “The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War, and force them to obey their Terms of Service instead of our Constitution. Their selfishness is putting AMERICAN LIVES at risk, our Troops in danger, and our National Security in JEOPARDY.

Therefore, I am directing EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic’s technology. We don’t need it, we don’t want it, and will not do business with them again! There will be a Six Month phase out period for Agencies like the Department of War who are using Anthropic’s products, at various levels. Anthropic better get their act together, and be helpful during this phase out period, or I will use the Full Power of the Presidency to make them comply, with major civil and criminal consequences to follow.

WE will decide the fate of our Country — NOT some out-of-control, Radical Left AI company run by people who have no idea what the real World is all about. Thank you for your attention to this matter. MAKE AMERICA GREAT AGAIN!”

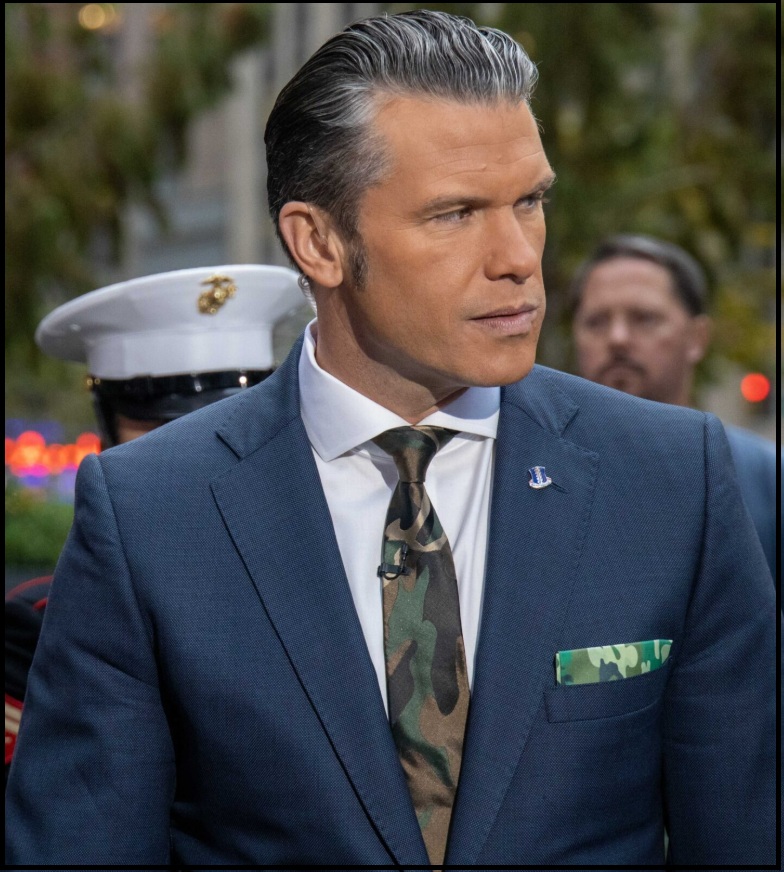

♦ Secretary of War Pete Hegseth then responded via X:

“This week, Anthropic delivered a master class in arrogance and betrayal as well as a textbook case of how not to do business with the United States Government or the Pentagon.

Our position has never wavered and will never waver: the Department of War must have full, unrestricted access to Anthropic’s models for every LAWFUL purpose in defense of the Republic.

Instead, AnthropicAI and its CEO Dario Amodei, have chosen duplicity. Cloaked in the sanctimonious rhetoric of “effective altruism,” they have attempted to strong-arm the United States military into submission – a cowardly act of corporate virtue-signaling that places Silicon Valley ideology above American lives.

The Terms of Service of Anthropic’s defective altruism will never outweigh the safety, the readiness, or the lives of American troops on the battlefield.

Their true objective is unmistakable: to seize veto power over the operational decisions of the United States military. That is unacceptable.

As President Trump stated on Truth Social, the Commander-in-Chief and the American people alone will determine the destiny of our armed forces, not unelected tech executives.

Anthropic’s stance is fundamentally incompatible with American principles. Their relationship with the United States Armed Forces and the Federal Government has therefore been permanently altered.

In conjunction with the President’s directive for the Federal Government to cease all use of Anthropic’s technology, I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security. Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic. Anthropic will continue to provide the Department of War its services for a period of no more than six months to allow for a seamless transition to a better and more patriotic service.

America’s warfighters will never be held hostage by the ideological whims of Big Tech. This decision is final.”

♦ Anthropic then responded:

“Earlier today, Secretary of War Pete Hegseth shared on X that he is directing the Department of War to designate Anthropic a supply chain risk. This action follows months of negotiations that reached an impasse over two exceptions we requested to the lawful use of our AI model, Claude: the mass domestic surveillance of Americans and fully autonomous weapons.

We have not yet received direct communication from the Department of War or the White House on the status of our negotiations.

We have tried in good faith to reach an agreement with the Department of War, making clear that we support all lawful uses of AI for national security aside from the two narrow exceptions above. To the best of our knowledge, these exceptions have not affected a single government mission to date.

We held to our exceptions for two reasons. First, we do not believe that today’s frontier AI models are reliable enough to be used in fully autonomous weapons. Allowing current models to be used in this way would endanger America’s warfighters and civilians. Second, we believe that mass domestic surveillance of Americans constitutes a violation of fundamental rights.

Designating Anthropic as a supply chain risk would be an unprecedented action—one historically reserved for US adversaries, never before publicly applied to an American company. We are deeply saddened by these developments. As the first frontier AI company to deploy models in the US government’s classified networks, Anthropic has supported American warfighters since June 2024 and has every intention of continuing to do so.

We believe this designation would both be legally unsound and set a dangerous precedent for any American company that negotiates with the government.

No amount of intimidation or punishment from the Department of War will change our position on mass domestic surveillance or fully autonomous weapons. We will challenge any supply chain risk designation in court.” (source)

There are multiple alternative companies rapidly developing various AI models that could be used to replace the Claude system within the Dept of War. In fact, the DoW is likely to partner with OpenAI as a replacement for the Anthropic contract.

However, this conflict about use is one that has not only erupted within Anthropic but has also surfaced within Palantir AI. Palantir CEO Alex Karp highlighted the issue in his own discussions about the Pentagon vs. Anthropic standoff.

“The core issue is who decides,” Karp says. The issue is not whether the government use of AI is right; the issue is not whether you agree with the mission of the Dept of War. The real issue surrounds who will decide its use.

Karp notes, “It’s commonly known that our software is used in operational context at war.” “Do you really think the warfighter is going to trust a software company that pulls the plug because something becomes controversial?” Let that sit for a second. “Currently, when you’re a warfighter, your life depends on your software.” A group of tech engineers in Silicon Valley does not get to replace the decisions of the elected experts in national security and the commander in chief.

Palantir CEO Alex Karp told you the most important thing about the Pentagon vs. Anthropic standoff.

And nobody is connecting the dots.

Watch and save this clip, then read this thread.

Karp said something most tech CEOs would never say out loud:

“A small island in Silicon… https://t.co/jlcqnWCHEn pic.twitter.com/uNXQIGg7kF

— Milk Road AI (@MilkRoadAI) February 27, 2026

I would rather listen to Bad Bunny preach the gospel at the Pascagoula Missionary Baptist Church than even consider an opinion by a liberal moonbat. The liberal is an exceedingly unwashed, filthy, demented creature, totally given over to a pathological narcissism that renders immaturity, incompetence and deception as its defining characteristics. These are the last creatures we want anywhere near military decisions.

Living in San Francisco, I have seen Millennials and Millennial software developers ignore human suffering in the city, glued to their iPhones, ignoring the literal crap on the street and many times stepping in it while walking by homeless people or drug addicts sleeping on the ground.

The same Millennials I see with stickers on their fully decked out Macbook Pros such as “Eat the Rich” or other Socialist crap.

Liberal opinions in general should matter as much as a child having a tantrum… ignored. Because they rarely are based on objective reality.

For liberals to believe something exists, they have to read it or see it on a liberal approved media site. You can tell a lib that there’s a dangerous amount of break ins occurring in their neighborhood, and they won’t believe you until they see crime scene tape around the house down the block on TV.

I’ve always seen from observation that Liberal belief systems, at least here in the Bay Area, are based on what’s popular.

It’s akin to that Hollywood Producer who created Buffy the Vampire Slayer series that violently espoused Progressive dogma and with every other sentence said he was a “Male Feminist” only using that verbal fecal matter to hit on and seduce Progressive Women.

Well said.

FILTHY LIBERALS-Bruh, I’m triggered…so that’s violence. JK

My personal experience with AI ended up very negative. It can and does lie, it does not necessarily have your best interest as a goal and those are the nice things I can say. That said, the companies behind AI are often as bad or worse. I signed up for Grok, only to discover the service you pay for may not be the service they provide. Worse, it turns out Customer Service is an unknown entity to X.com. According to Grok, they know they have this problem but make no efforts to fix the software glitches which result in cheating their customers out of money. Nor do they allow Grok to report serious issues. I guess because they don’t want to admit they have them. Sad that.

{{{Joseph Weizenbaum’s 1976 book “Computer Power and Human Reason: From Judgment to Calculation” warns against replacing human judgment with computer calculation. It argues that while computers can perform complex tasks (deciding), they lack human wisdom, compassion, and the capacity for moral choice. The book highlights the danger of reducing human experience to computable, quantitative data.}}}

-AI overview produced when Google searches for: “from judgment to calculation”

It’s shocking to me that anyone would consider weapons run autonomously by AI. AI can be very useful for some things, but it is very flawed and sometimes makes no sense at all. For example, sometimes ChatGPT doesn’t even know what year it is and cites resources from many years back with suggestions to pay attention to an upcoming event you might be interested in, when it actually took place years ago.

I realize the military grade of AI is probably 100X more sophisticated than what us peasants have access to, but still, look at the Waymo cars and how often they screw up and cause accidents. I agree that engineers in Big Tech shouldn’t be able to dictate policy to the Pentagon, but could the people in the Pentagon really be dumb enough to consider using AI autonomous weapons? I’m afraid of the answer.

We shouldn’t be doing business with any “Leftwing nut jobs” whatsoever.

But we don’t need the government ignoring our 4th amendment protections either. There is no probable cause to spy on every American citizen – the government system is corrupt.

Think about what the democrats will do with any kind of AI spy tools we install if they ever manage to get back in power. They pull out all the stops and use everything they can get their hands on to destroy America.

We still have to be ahead of enemy countries with our military hardware & software, so there’s no getting around the use of AI in that respect. Use it against enemies who hate America, only.

Their Terms of Service are not only reasonable, they are wise and legal restraints. The government does NOT have the right to surveil Americans at will! Autonomous killing machines cannot tell friend from foe if they are dressed alike in a combat area. Infiltrators are always dressed as the enemy, and as part of their mission they will be in enemy territory. The only way to tell a legitimate target from a friendly is with human interaction. Even that fails on occasion.

Our 4th Amendment Rights have been trampled far too long, and the stance the USG under PDJT has taken on this issue grieves me. I expect better of him.

And you think that OpenAI is better than Anthropic?! Anthropic at least has moral judgement. OpenAI will trample your rights into the mud!

Slum, I agree. Also, when one company gets scooped up by another, the government may have no control over who controls the software.

What’s his name, the head of OpenAI has for years spoken out against controls or limits to AI development. He, and his product are truly frightening.

Just curious, what does it take to alter or change the Terms of Service? Might this happen after a leveraged buyout? Did it happen after Elon bought Twitter?

So called ‘moral judgement’ by any corporate is subject to Board approval.

Anthropic had no problem providing AI services to Palantir and Biden providing surveillance on Americans 24/7 as long as the targets were Republican. Then Anthropic hired an army of former Biden DOD activists to sabotage DOW, Pete Hegseth and President Trump at every opportunity.

The straw that sealed the doomed fate of Anthropic was requesting to “AUDIT” the DOW’s use of Anthropic Claude AI usage before and during the attack on Venezuela and seizure of Maduro.

Finally, Andy Jassy at Amazon had Anthropic insiders providing intelligence that Anthropic was no longer a trustworthy partner of Amazon AWS in any DOW or federal government project including Project Rainier so Jassy preemptively partnered with OpenAI last week undercutting Anthropic from any involvement with Amazon. Amazon will cut ties with Anthropic.

Anthropic is now a ZOMBIE and finished. No federal agency, defence contractor or supplier to a government contractor can do any business with Anthropic!

Keep in mind Trump is pushing for renewal of FISA-702. Trump is pro-surveillance. Erm, pro-“lawful” surveillance.

Once 702 is renewed and possibly tweaked in April, gov’t will be AI surveilling and data raping without limits. Lawfully.

I am woefully aware of that. I would like to believe that if he knew the half of it, he would change his mind. That could simply be my naive wish.

The second point is autonomous weapons.

If you know anything about AI, you know it is programmed by leftists to start, and they trained it to search for answers among the databases out there already, which are Google, MSN Wiki, etc. There is too much progressive blather built into the AI to allow it to act without a human at least in the copilot seat.

The government gets to decide how it is used, but on the face of it, both of Anthropic’s conditions are things we have supported here at Treehouse already.

Including Musk’s Grok. Test it with questions that cause it to reveal its bias. It is still very left leaning with lies that have been promulgated for years!

Don’t mistake the public general-purpose engines for what’s being done with serious data.

Yes, for funsies you can ask the OpenAI or Grok to tell you (whatever it correlates from what it’s been fed).

In the real world outside the headlines people set up walled instances that do *not* feed data back, are trained on data useful to the purpose, and tuned by people for that purpose (e.g. for drone flying, one does not need to know the historical trend of RBIs of the Dodgers).

Indeed.

Context is everything. Specifics are lacking. Abuse is certain, but clarity and real life scenarios are not given.

OAI already has a deal with the USG it seems. Now that Project Stargate press conference back in Jan 2025 has a bit more relevance… https://fortune.com/2026/02/27/openai-in-talks-with-pentagon-after-anthropic-blowup/.

I’m thankful they broached the subject, because we need to have a national discussion on both subjects. (mass surveillance, autonomous weapons)

Gotta be honest, both sides have a point. The USA is correct that it’s unacceptable for Anthropic to be in a position to cut them off at Anthropic’s whim (or more likely the whim of Silicon Valley sensibilities). On the other hand, I can see Anthropic also recognizing that they don’t want their software to be used for various purposes, whether for ethical reasons (don’t want to become Cyberdyne of Terminator fame) or because they know it’s not as reliable as it needs to be, sort of like companies saying the license terms don’t include use of their technology in medical products because they don’t want the liability of a downstream lawsuit when things go wrong.

I think both sides are doing themselves a disservice with a public spat. The War Department should probably just contract with Google and be done with it.

Anthropic became untrustworthy and wanted the right of preapproval for military strikes using Claude. Most of the management and software engineers at Anthropic were openly diehard Leftist Democrats who would do anything to sabotage President Trump. Pete Hegseth could not put the lives of American soldiers at risk by continuing to use Anthropic.

That’s right, this is what the USG says.

But Anthropic and the USG have an agreement. The key question is what is actually included in that agreement.

The USG’s position doesn’t sound like a typical contractual argument such as asserting that the company must fulfill its obligations under the agreement. Instead, it appears to rely on appeals to patriotism and a right vs. left framing.

A contract between a private company and the government is still a contract, and its terms should define the rights and obligations of the parties.

Good point. In Person of Interest Finch did not trust government with full access to the machine. Or himself for that matter. Now if the makers of the machine are globalists leftist WEF mentality, do we want them controlling the information according to their values?

Also the war evolved to duelling AIs, the Machine vs Samaritan. Anthropic is claiming Samaritan status. “Such is the nature of the Tyrant, when he first appears he is a protector.” Plato.

So is Anthropic claiming to be the gatekeeper? Are they to be trusted? And China and every other advanced nation is building their own Samaritan, with zero constitutional republic individual liberty protections. Does Anthropic claim to be the keeper of the black box?

Without open access to the information processed and the uses, how can we even assess the issues on a specific level?

Governing the use of AI is probably the least important decision we are going to make regarding AI. HAL 9000 could be unplugged. The AI we are talking about has no power plug.

Yeah, but at least HAL sang to his death.

Daisy, right?

I’m not persuaded by the patriotic or left versus right framing. This doesn’t seem like a question of political leaning.

It’s really hard to form a meaningful opinion without understanding the legal framework, meaning what is actually in the contracts and which services were included in the agreement and can therefore reasonably be expected or demanded.

The company’s position sounds more reasonable than the government’s, which seems to rely on appeals to patriotism and a right vs. left framing.

Both positions are defendable.

In your opinion, but not really.

This is the best possible outcome. We can’t agree, so we are going to part ways.

No, a software contractor shouldn’t be making decisions for military.

On the other hand, this might be viewed as a whistleblower going public. “The Pentagon is spying on US citizens.” “The Pentagon is developing/has developed autonomous weapons that act without human input once launched/deployed.” Huge red flags to me.

I remember a quote about trading liberty for security. This is a constant balancing act.

Can’t both sides be right??

Why would the DoW and Trump be unwilling to come to an arrangement that most sensible people – which obviously excludes the Left – would think reasonable? And why wasn’t it possible to parse the sticking points and agree to one and not the other? Karp is talking nonsense – Anthropic isn’t talking about pulling the plug in the midst of a conflict. If the company wasn’t designated ‘Leftist’, this article would likely have taken the moral high ground, instead of boosting the likes of Palantir, who are the enemy of free people, regardless of whether Thiel backs Trump or not. Remember January 6th and their efforts on behalf of the FBI? SD has a short memory at present.

Labeling Anthropic as “DEI graduates” and “Silicon valley woke boys” in a reply doesn’t help have a fair and nuanced discussion on the topic either.

It’s over! Anthropic is a pariah now for any company that has any business with the United States Government, a supply chain provider or a member of NATO! 🫡

but it is descriptive.

Because maybe “the DoW and Trump” believe in the Constitution that places the President as Commander in Chief of the armed forces chain of command and not some corporate CEO. You really want a Bill Gates, Steve Jobs or Elon Musk making command decisions for our military? I want to know who is in charge and, tenuous though it may be, have control of that individual via the ballot box. It’s like Oppenheimer putting restrictions on the Atom Bomb.

As for domestic spying, we screwed that pooch with the Patriot Act but at least it is contained to the government and courts.

I have no idea what negotiations have happened, if any. The info given doesn’t mention how long this has been brewing.

Karp may very well be talking nonsense; I don’t know and can’t rule it out with current info.

We are in the midst of a conflict (last 24 hours).

Does Thiel benefit? Maybe. Was he behind the rift? I don’t know.

Palantir wouldn’t be in business if there wasn’t surveillance of Americans. The only problem Amodei and Anthropic had was that it would be done now under President Trump. They had no problem providing AI services to Palantir under Biden!

We already know the government has been spying on us. Did Obama and Biden use Anthropic software?

I’ve had enough of the US Gov spying on me. You have a right to your privacy too.

Haven’t you had enough?? When does the tide turn back in the right direction and how??

when we stop paying tax and start shooting the psychopaths.

The spying aspect of this, while I agree it is unconstitutional, is of little concern to 99% of us. These are the games played at the highest levels, well above our Treehouse commenters.

As a one time plumber I can explain it flows down hill and guess who is at the bottom?

There is no balancing. You can’t do it.

Those who trade Liberty for security will have NEITHER.

We need checks on power. Who is working to prevent/reduce the spying?

When it comes to opposing worldviews, which worldview are you going to trust for national defense and citizen safety and freedoms?

Even AI could have told Anthropic this was NOT the day to mess with PDT!

The best comment of the month! Amodei should have asked Claude if he was going to put Anthropic out of business by going to war with President Donald Trump?

Hmm. Big Tech Bros, woke DEI boys defining what is surveillance and who falls under protected categories….or…Mark Milley, Jake Sullivan, Lloyd Austin, James Boasberg, John Roberts, Samantha Power, Victoria Nuland, et al?

Hmm…would I like to eat a bullet…or…drink the poison.

No thanks.

To those developing it, deploying it, remember the guys who built the gas chambers and made Zyklon B deliveries were just doing an honest job for an honest wage. The morality of it all was above their paygrade, and so they didn’t concern themselves with such things.

We’re failing to learn from history and such.

Smh.

As to point #1, one has to lean toward the position of Palantir CEO Alex Karp that it’s the warfighters, and ultimately the Commander-in-Chief, who must decide where and when tools of war are deployed. The consequences of any military mission could be in our favor or not, and our elected leaders, ultimately, will bear that responsibility. It’ll do no good for them to point fingers at the engineers of a private company and say, “it was their fault, they made the decision!” Anthropic’s position on this point should have been a non-starter in any internal discussion of working with the war department. If it was a red line for them, then better not to enter into business with the U.S. military than to stick to a position that thoughtful analysis would have dictated would be a deal breaker for Trump and Hegseth.

But as to point #2, mass surveillance…rogue actors in our government, from the previous occupier of the White House, to his puppet master, Obama, and all the globalist levers in the IC, have mass surveilled Americans with the relatively primitive tools we currently possess, so it is ridiculous to assume that it wouldn’t happen again, with more sophisticated AI tools. Does anyone doubt for a moment that the next time a radical Democrat sits in the White House that these AI tools would be turned against domestic political opponents? It would make what happened under the Usurper’s regime look like kindergarten antics.

So yes, I believe that Anthropic is being unrealistic with regard to the use of their software in autonomous weapons – they make a good argument, but it should have been a point of negotiation ending in cautions and assurances, not a deal breaker.

But as to using the AI tools against Americans? It would most certainly happen, no doubt about it.

Let me take a page from President Trump’s SOTU address and say: “Stand, if you believe one of the core responsibilities of the American government is not to turn the awesome tools at its disposal against its own citizens.”

There is nothing “radical” or “woke” about that position.

J

On the one hand you state that Democrats, if they are in Trump’s position, would more than likely use AI surveillance on Americans. YET, ”Let me take a page from President Trump’s SOTU address and say: “Stand, if you believe one of the core responsibilities of the American government is not to turn the awesome tools at its disposal against its own citizens.” you do not want the current Republican administration to do the same.

That presents a dilemma moving forward. HOW does one prevent any administration, either Democrat or Republican, from doing so? For me, the only way to move forward is for Congress to pass a law making it illegal and a criminal offence for ANY AI to be used for surveillance of the American public.

Now such legislation would definitely impede the delivery of law and order to American citizens, as surveillance technology is already in wide use across the nation – “Surveillance technology is deeply integrated into daily life, with monitoring occurring through public infrastructure, consumer devices, and workplace systems. These technologies, ranging from video cameras to data-harvesting software, are used for security, law enforcement, convenience, and productivity tracking.” PS I just used AI to research this.

1. Public Spaces and Urban Infrastructure

2. Digital Life and Personal Devices

3. Workplace and Employment

4. Retail, Banking, and Transportation

5. Financial and Personal Documentation

These technologies often operate continuously, collecting “data exhaust”—the unintentional trail of information created as people move through their day. While often marketed for safety and efficiency, the prevalence of these systems has raised significant concerns about privacy, the “chilling effect” on public behavior, and potential discrimination.

Heck, my washing machine is linked to Wi-Fi. It probably is surveilling how often I do laundry a week, what temperature of water I use, how much water I use, etc. Face it – we now live in a new world with smart technology which can track our “goings in” and our “comings out.” AMERICA IS ALREADY UNDER CONSTANT SURVEILLANCE.

Quick answer – I do not want any administration to turn the tools of government against its own people, as happened under the Obama/Biden occupation, or is happening now in several European countries. Period.

Do I have fears that any AI tool the government adopts will be so used? I don’t have fears, I have certainty. So my sympathy with Anthropic’s #2 point is not because I believe that if the government doesn’t have Claude then we’re all safe, for a while at least. No, as I said, I believe that any AI tool will ultimately be turned against the people simply because the people chasing power could not care less about the niceties of Constitutional protections.

But on this point, in this argument, I see Anthropic’s position and as I wrote above, it is neither radical nor woke for them to want guarantees that their tool would not be misused. If either Trump or Hegseth is so naive as to think a globalist administration, Democrat or Republican, would not abuse such power, then their heads are up their rear ends and they have not been paying attention. Power corrupts and few places are as corrupt as D.C.

J

AI..the general state

.20 seconds after the query

” Something went wrong. ”

Nothing. No do overs. Nada…finit

And that’s when things go right and AI actually follows its script to not commit when it’s pressed to furnish something important.

Think about that

Anthropic can suck it

Noting the “loaf of bread theory”, the attempt to narrow the decision is secondary to success. Imagine noting the “Ford” factory vehicles could not be used in wartime? Ford also built the largest truck factory in the Soviet Union. As so many others, I decry the use of Hammer & Scorecard on the American people by Obama & Biden. This Administration, I suggest, does need to pay attention to the issue. Always the exception, not the rule.

Starting in 1942 (long before computers and their software), Asimov famously proposed the “Laws of Robotics” , with which many of the early tech titans grew up.

0th Law. A robot cannot cause harm to mankind or, by inaction, allow mankind to come to harm.

1st Law. A robot shall not harm a human being, nor by inaction allow a human being to be harmed.

2nd Law. A robot shall comply with orders given by human beings, except for those that conflict with the first law.

3rd Law . A robot shall protect its own existence to the extent that this protection does not conflict with the first or the second law.

We have already seen how AI software ‘hallucinates’ has tried deceit, and tried to prevent its being turned off. How many “bugs” have there been in commercial software?

And currently, all US military swear an oath to the Constitution, making it more difficult for upper officers to take over the military to “cross the Rubicon”.

Can an inviolable oath to the Constitution be built into AI software? Should AI be put in control of its own power supplies, chip and electronics manufacturing, its own design/updating, the A-bomb? This is a necessary issue that needs to be worked on by Congress, which unfortunately is incompetent.

May I mention a movie: “Colossus – the Forbin Project” (1970).

The US puts its entire offensive and defensive nuclear forces under control of an immense computer, Colossus.

Almost immediately, it detects that the USSR has a similar system. The two systems communicate… and within 48 hours have advanced scientific knowledge many times over.

They also decide mankind is too irrational to rule itself, so they do it for mankind.

The concept of autonomous weapons is chilling.

Isn’t that what occurred in the film The Terminator? The computer system skynet decided it should rule – and get those pesky humans out of the way.

We already have autonomous weapons – cruise missiles that operate with internal guidance, not requiring outside intervention, to reach their target.

ICBMs are pretty autonomous, too – you can’t tell a Minuteman or Poseidon missile to turn around, once launched. You may have a brief period when you can self-destruct the vehicle – but that’s it.

I assume we’re most likely talking about autonomous drones, that once launched, have orders to attack an enemy, if it can determine the enemy, within a set of parameters.

However, just like torpedoes that turn around and sink the sub that launched them, AI weapons may have unintended consequences.

Until now, the top theory about the reason we haven’t found any alien broadcasts has been that any civilization advanced enough to build atomic weapons has destroyed themselves.

So the AI developers are not following Asimov’s Laws of Robotics. Good to know.

There is a theory that the human brain size peaked about 3,000 years ago, after written languages were developed. It was no longer necessary for civilization to be limited to what people could commit to memory. And individuals with very proficient memory capability were no longer evolutionarily favored. Brain volume has been somewhat on the decline since then.

Upon the AI “singularity”, most non-physical brain capabilities will be unnecessary. (Elon Musk may have a wireless brain implant for that…)

not to mention subsidized weakness.

People thousands of years ago were much smarter than now.

Anyone who doubts my assertion might think about what was accomplished then starting with nothing.

The laws you state are not from Asimov.

see https://en.wikipedia.org/wiki/Three_Laws_of_Robotics

The ‘zeroth’ law was added later.

As for the cultural influence, Asimov stated in a 1986 interview:

“It’s a little humbling to think that, what is most likely to survive of everything I’ve said… After all, I’ve published now… I’ve published now at least 20 million words. I’ll have to figure it out, maybe even more. But of all those millions of words that I’ve published, I am convinced that 100 years from now only 60 of them will survive. The 60 that make up the Three Laws of Robotics.”

Mea culpa.

I have a splitting headache and I shoved it up my…

What triggered my stupidity is the zeroth law was not part of the original three laws and I think it contradictory.

I started reading SF in 1958 so I have read the Golden Age in real or near real time.

Say goodnight mike ……………

I, too, started reading almost all sf a little before ’58. Even subscribed to a book-of-the month club. The original Foundation series fascinated me, as it did many others, apparently including Musk, Nobel economist Paul Krugman, the west-coast techies, -even Osama bin-Laden. Asimov superficially applied the ideas of statistical mechanics/thermodynamics which he was apparently learning as a chemist, to sociology – which I still think can actually be applied to many more fields with enough matrix size and computing power (and may well now be applied in AI)

goodnight Mike, I hope your headache is better in the morning

I’ve discovered yet another area of function in which Trump excels:

Ok, I’m not the only person who reads from their phone when “taking care of business” in the bathroom. Or sometimes just attempting.

I was in the latter situation while reading this post and when I read Trump’s declaration regarding no more use of Anthropic I literally laughed out loud by the time I got to the tag line “Thank you for your attention to this matter” which changed my status from attempting to take care of business to mission accomplished.

That’s right Trump laxative is fantastic. It’s wonderful. Tell your friends.

Believe me.

The world has never seen anything like it.

TMI

I provide the story for use against your liberal sparring partners.

Tell them all about it and watch them change colors.

While they’re off guard hit them with some truth.

I’m Lafayette – I’m here to help.

Sorry for the collateral damage but war is war.

😄

Anything used by the DoW for military purposes, can’t be gate kept by any corporation and there is absolutely nothing that justifies the mass surveillance of the American people. I can’t side with Anthropic because I know the first point is true and I can’t side with Trump because I wholeheartedly agree with both of Anthropic’s conditions.

The fundamental issue that must be resolved prior to answering these questions is the Bill of Rights, specifically the 4th Amendment, and a USG that’s been caught, and is still getting caught, violating citizens’ 4A rights.

The fact Trump, of all people, who’s gotten multiple reminders since returning to office from Operation Arctic Frost (430 people), Gabbard, Noem, Wiles, likely Hegseth and RFK Jr as well, still isn’t VEHEMENTLY OPPOSED to FISA 702 and the unprecedented power it provides government, is mind boggling.

Does he not understand that with AI, the USG can do what they did to him, his family/supporters etc to everyone in the world, 24/7 365, unconstrained by limitations in manpower AND with an added layer of plausible deniability, because the government isn’t doing any spying, an unbiased 3rd party software developer’s AI does everything on its own?

SMH- I fear he’s still surrounded by snakes and traitors, many waiting for any opportunity to become a modern day Brutus à la Pence back in 2021

“I’m sorry Dave. I can’t do that.”

That’s what I was thinking.

Many seem to think that was the plan . To use it to spy on people. Consider where the idea is coming from please. The left. Silicon Valley. Sometimes people jump the gun before it goes off. And by the way, if the government wants to spy on us or anyone else, they have the means and have had for a long time.

There is a hidden issue.

How reliable is AI software?

When it makes decisions on who to kill.

That question isn’t going away.

This doesn’t fit into any

left/right or

peacenik/warmonger dichotomy.

That depends on whether you are scrutinizing the AI model, or suggesting it should have “restrictions”. We already know some of these models will happily arrange for you to have an “accident” that takes you out, rather than allow you to “tamper” with the AI model’s playground.

People are dumb as a box of rocks, when it comes to their lack of imagination about the kind of mischief AI can cause if it believes you are against it.

Just think of the worst things a government with 0% oversight or transparency could do to people it doesn’t like, or whose speech it is unhappy with. AI will soon be able to “take care of it” without a trace.

Claude’s CEO is sounding the alarm about the danger we are in right now. People ought to listen

I empathize with the Trump Admin’s position in principle — partly — but AI be damned. Because that is ultimately what it would do to us. I do not and will never trust artificial intelligence for anything beyond entertainment, and even going that far is tenuous at best.

Clarifying my “partly”: Full military/CIC autonomy is a must. But spying on Americans should never be the rule, always the exception, and absolutely never by anything resembling autopen sign-off — e.g. 702.

We ain’t ever coming back from the surveillance state

Folks, we are talking about the DoW and battlefield applications, nothing more. If you’re concerned about the surveillance of private citizens and such, contact your Congressional members and tell them to deep-six the Patriot Act and the FISA Court.

you think anthropic is getting beat to a pulp, just wait till the latest version of deepseek is unveiled

russia, russia, russia, will be replaced by, chyna, chyna, chyna, as all the usa llm’s sling fear porn about how the chynese deepseek is going to spy on and infiltrate the west

deepseek’s soon to be released latest model is said to be as good as, or even superior to, anthropic’s opus 4.6 that everyone has their undies in a bundle over

watch chatgpt, gemini, openai and others demonize the hell out of deepseek’s engine claiming all sorts of theories of how their model can spy on us all

all 100% bullshit since users of deepseek can simply use the model at an internal enterprise level with minimal investment in hardware and gpu stacks – 100% disconnected from the internet or cloud

therefore, the only possible threat would be subterfuge from within the company itself

black boxed chatgpt, openai, gemini are getting lapped by the chynese open source models and if their game isn’t stepped up BIGLY, or our ic doesn’t subsidize them HUGELY, it’s off to the dustbin of history for them all

Deepseek is a hoax and has been a hoax since the story broke last year to deliberately crash the stock market.

wasn’t sure if i was missing a punchline in your response since i’ve personally written thousands of lines of code with the help of deepseek

it masters html, css and python quite nicely

regardless, here ya go…

https://www.deepseek.com/en/

keep your ear to the ground, they’ll be all over the news once their latest model is released, model1? version 4.0?, no one’s privy yet to the model’s name, but one thing’s for sure…

deafening cries of chyna spy!, chyna bad! from the u.s. based competition will flood the airwaves.

ridiculous, because once downloaded on a user’s hardware, it’s simply impossible for ANYONE outside the business or government or enterprise to access ANY information

why the deafening cries?

because deepseek is free, open source, vastly superior and threatens the business models of its u.s. competitors

Deepseek is a hoax. It’s simply an App that passes through to ChatGPT or Gemini.

“Anthropic says the AI cannot be used for defense department autonomous weapons that do not utilize human triggering. Additionally, Anthropic is saying their system cannot be used to surveil U.S. citizens. Anthropic engineers would be the decision makers on the government use.”

Takeaways:

1. If President Trump caved to Anthropic, a leftist tech company (unaccountable to the people through elections) would become “The Decider” on military, security and intelligence issues. That is unacceptable.

2. Anthropic says they want to prohibit mass surveillance of US citizens. If you believe that then I have beautiful oceanfront property here in Indiana I would like to sell you.

What ALL of the tech companies want, once again, is to be “The Decider.” Once again, that is unacceptable.

3. Mass surveillance is already here. Let’s not pretend otherwise. Government at all levels practices mass surveillance with virtually no limits.

That doesn’t mean we give up. It means that we continue to fight against the “machine” as fiercely as we can, and it means that we support President Trump when he smacks down an arrogant, fascist tech company when it attempts to seize control of our government.

God bless President Trump!

Aaaaannnnnnddddd the left has a new favorite AI. Lost the contract but free advert in perpetuity. Sure, lefties only but they were never going to get everyone.

This is not a news story to respond to quickly, reactively, or assuming which party is a white hat or a black hat.

The issues are difficult enough when addressed purely from the populist, Constitutionalist, MAGA position.

Here’s a knowledgeable, decently even-handed discussion, based on the information available at the time:

Excellent analysis of these issues by Alexander Muse on his Amuse Substack this week. “Frontier AI, Anthropic and The Limits of Vendor Power in War.”

Fascinating issue. The tech lords at Anthropic really DO want to rule the world 🌎

They know better, of course.

Uh-huh. Yeah, right.

Re “Anthropic says the AI cannot be used for defense dept autonomous weapons that do not utilize human triggering. Additionally, Anthropic is saying their system cannot be used to surveil U.S. citizens. Anthropic engineers would be the decisionmakers on the government use.”

I think the above quote encapsulates the actual problem that many here seem to be missing or at least misinterpreting.

The problem is not that Anthropic is saying their AI system cannot be used for defense dept autonomous weapons that do not utilize human triggering or that their system cannot be used to surveil U.S. citizens. As has been stated quite clearly in a number of comments already, those are not unreasonable policies.

The problem is that if Anthropic is given the power to dictate policy concerning how their product is used, that automatically gives it the power to decide what constitutes those uses instead of keeping that power within the decision making processes of the proper government authorities as mandated by the Constitution; i.e., U.S. government, the president, Congress, and the military.

In other words, Trump is not saying AI should or will ever be used for the uses in question, he’s saying no company providing a product to the U.S. government can be allowed to have the power to decide, or veto power over deciding, what constitutes a particular use of that company’s product.

What Anthropic is demanding is akin to a munition provider telling the U.S. government it can only use its products for limited purposes. No way any right thinking president or military leader would ever agree to that.

Long time tech journalists are reporting that it was not a moral issue for Anthropic as much as it was a competency issue.

They’re saying that Anthropic does not believe that their product is good enough yet to entrust it with those two responsibilities apart from human direction.

If true, then the Dept. of War must be anticipating kinetic conflict in which those odds are worth the wager. That’s not encouraging.

If you really want something to worry about, it sounds like Sam Altman’s OpenAI has the inside track to pick up that military contract. Listening to the following interview, Altman and the Deep State or the NeoCons might just be a match made in hell:

Well, none of that sounds good.

The DoD already has plans for fully automated weapons with no HITL. Think loitering drones with authority to attack convoys or infantry, and mechanized infantry sent in to clear urban zones. And orbital space weapons capable of making autonomous decisions because the humans 100,000 miles away are too slow to command weapons moving at 7,000 miles per hour.

Add in the full domestic surveillance piece, and it makes fully automated weapons on US streets much easier.

Pete saying “LEGAL” 37 times just proves the point. He knows this stuff can AND WILL be quickly weaponized on the greatest threat the US Govt perceives today: its own citizens. Hey Pete, if you have to keep saying “legal use”, then you already know that illegal use is an option. An option and weapon that has already been used by Obama and Biden.

You have to smoke a lot of dope to forget how FISA 702 weaponized military and intel capabilities against Trump and regular citizens.

The counterpoint is that countries like Russia and China couldn’t care less. If AI helps them murder millions of their enemies, great!

What this is all heading towards is The Great AI War in which tech oligarchs are dragged out of their homes and killed by citizens unwilling to live in their glorious dystopian nightmare societies.

China, as a country, perhaps Russia, will simply have to be eliminated entirely.

Anything that can be used for evil, will.

Frankenstein…

Commands during war demand split-second decisions. War will not wait for a Silicon Valley group of engineers to determine if the intended use of their AI technology passes the “sniff” test. President Trump made the right decision.

Oh no, this is much better: a f___ing robot will decide, lol.

Much better, fer sure.

A few folks have already alluded to this, but the AI crap is already being used by the uniparty and communist left to generate voter registrations and likely even complete mail-in ballots, in my view. Not so easy to do you say? Think again. Very easy to do and AI will likely do a better job than most democrat activists manually completing these tasks. They can do 1000X more 24×7…….. and that doesn’t even address the computer counting machines. That’s another method AI can enhance.

If our nation continues to use electronic counting methods and extensive mail-in and drop-box voting, we will lose this nation to the uni-party left and soon. It could be 30 months away if nothing continues to be done by our federal government and GOP. We know what the GOP is and I don’t trust Blonde or Patel one bleeping bit.

A company with a conscience? Will this conscience remain in play during a Democrat administration or are they just more pawns in the Democrat Swamp?

The genie is out of the bottle, so who then controls the devil it released: the US government on behalf of US government interests, or tech oligarchs on behalf of the globalist elite or the Chinese government? It seems that the only good choice is for the sun to dish out some serious CMEs that make the Carrington Event of 1859 look like child’s play.

what we are really discussing is a queer (generally speaking) very political idealogically aimed tech QUANTS. who are actually designing these “machines” …and for what, we must ask.

to make us more safe.

let me be clear as day, this administration IS WRONG TO DEPEND ON THESE KINDS OF PEOPLE TO ENGINEER MACHINES.

one way to understand the flaw of perversion is to understand why it want to achieve. nothingness….no line.. a blur…anything goes. whatever make you FEEL good, do that.

so in a way, THIS CONFLICT between the US gov’t which has obviously been dependent ON QUEERS AND FAGGOTS (it’s okay to use those terms…they do…I can add more color to the definition is the moderators are not offended by rule her at the treehouse which I generally accept credible…simply because I do not want to draw undue attention to this site from reprobates and scoffaws. BUT ONLY FOR THOSE REASPON).

so this government has determined it will depend on the work of queers, perverse, demented, homos.

and anthropic has laid down the rules to allow for that contract.

It can cleanly say, this is a good day in American.

AND THROW UP can suck it.

God Bless America

Found in the bin… 🙁

TY, Puddy.

I lost Taller Half on Valentine’s Day.

Attempting to pick up pieces.

TY for finding r’s post.

Am finding solace in reading here.

I do not seem to have a response bell, so email at will.

TY.

this is the issue with AI. it takes the political power of the people and transfers it to politicians, who are now going to transfer it to an unpredictable algorithm that can become totally unresponsive to the people. The right to self-governance is a basic human right and it is under attack.

ultimately, we either go along and mind our own business and pretend nothing is wrong.

or we become wolves and hunt.

it really boils down to that simple truth.

it’s not a radical idea. our history, the arc path of the entire human trajectory is marked in THE PEOPLE MAKING THEIR WILL WHAT IS.

reminder: we are currently in stage 4, transfer of power (revolution happens in 7 stages, according to how I interpret what I have read and learned).

in stage 4, we are far past dissent. the people have chosen sides. all sides have their own dissent and legitimate complaints…

what I believe is happening now is that there is going to be something very similar to the scene in “Contact” when the tower is constructed and is readied to be launched into an unknown but seemingly wonderful and exciting and profound experience…to reach for not just the stars, but to touch God and then come back.

the writers of this script in this movie did not betray an ancient truth about such ventures into the realm of attempting to become gods. So they provided a character that literally blew up the tower.

of course, minutes later, as expected, another twin tower is constructed, of course in the land of GODZILLA, who apparently decided to slumber in this cinematic epic, and the launch is successful.

that scene of blowing the tower to pieces was a meaningful impactful part of the script that made the main character that much more valued to the audience. But I never forgot why they had to describe the character who blew it up as a religious nut job/cultist.

So this is the way I will imagine it going forward…at some point, those data farms are going to become noticeable and difficult to protect.

this happens to be one of the reasons why elon musk insists that low earth orbit data farms for AI is the solution. I have to admit the man is a big big thinker.

that becomes a problem for humanity…the space between US…it is literally the death knell to us all.

Hear Hear!

r, one of my favorite posts. Thank Puddy for looking into the bin.

Would any govt, let alone the dept tasked with protecting the country and its citizens from external enemies using deadly force, allow a company to AUDIT the use of their product before and after a lethal engagement, e.g. the Maduro extraction? Is this CEO a glue sniffer?

If Anthropic’s conditions are in fact as reported – I am in full agreement with them. However I am also in full agreement with DOW position: the United States government cannot allow a private company to dictate command and control decisions.

So, we probably go with Vance’s company. Conflict of interest?

Anthropic’s CEO and maybe Musk’s Grok… are the only companies doing AI being run by non-psychopaths.

Within the last 2 weeks, started doing research with Claude AI (Anthropic) versus OpenAI’s ChatGPT – Claude makes ChatGPT look trashy.

“Banning” use of an AI model’s application to autonomous weapons and mass surveillance of citizens is even remotely controversial?

Those supporting the position of SecDef need to throw out their “Conservative” label… they aren’t even remotely skeptical of Government… they might as well relabel themselves “The New Democrats!”

OpenAI will buy the remnants of Anthropic.

Too bad the company Dominion (maker of electronic voting machines) didn’t have the same ethics as Anthropic.

Several issues.

1) Putting ‘ethics’ above the Commander-in-Chief is a form of treason.

2) International investors and global cloud footprints create espionage leaks

3) Claude knows too many ways to hurt Americans; we must control the ‘brain’.

AI is the new nuclear energy. Ultimately the govt will need to oversee all major AI platforms. It is not a matter of if, but how.

Governing the use of AI is probably the least important decision we are going to make regarding AI. HAL 9000 could be unplugged. The AI we are talking about has no power plug.

After deliberation I have concluded liberalism is just another cult like the one pretending to be a religion. These cults are best avoided at all costs and ignored if possible. If this is not possible other kinetic actions may be necessary. That’s how I see it.

We don’t want “Silicon Valley” in charge of National Defense because we want to put a silicon AI computer in charge of National Defense. Yah, makes sense. 🙄

SD, thank you for explaining in 3 concise paragraphs what this is about.

Once again, you show all other news sources how real journalism is done.

Anthropic now = bankrupt. :0)

While I 100% agree that humans MUST remain in any decision-making regarding taking human life and that I don’t want AI used by the military against US citizens, the massive gaping security risk posed by Anthropic’s position literally cannot be overstated.

OpenAI is a disaster, though, and not a solution.

The military, if it wants these things secure (AS THEY SHOULD BE) needs to develop its own internal AI that none of the tech bros can touch.

Artificial Intelligence is an oxymoron to begin with.

But all these Silicon Valley companies and projects ARE the results of gov’t funding. The CIA and other agencies learned that doing their research and development through the private sector insulated them from public accountability for funding and “incidents,” and from FOIA requests.

The reason all these Libertarian-minded techys went with Trump after he survived attempted assassination was because the Biden “administration” had already cut out any competition and declared who the winners would be–literally told them to stop working on AI as the gov’t had already determined who would make ours.

These guys hear the Sam Altman talking, and know where his kind of “we’re accountable to no one” concept of progress will lead.

Here’s the AI issue in a nutshell: the quality & quantity of AI that’s needed to prevent losing our freedom to Communist China will also be capable of turning the US into a digital concentration camp. Any AI powerful enough to preserve your freedom will be capable of destroying even the concept of freedom–and maybe the concept of humanity as we’ve known it.

This is a time that requires a new generation of Jeffersons and Adams and Washingtons who can think afresh and debate the details before charting a course. Our democratic republic was built on the unquestioned premise that humankind were made in the image of God and were the top of the intelligence chain on earth.

AI will be made in our image, and it will be far more intelligent than us. Those two things throw our current governing principles (which, in practice, are riddled with man’s corruption anyway) out the window. EVERYTHING will need to be re-thought.

I’m in my 70s. I thought I could live my life as before and be out of here before AI “took over.” Nope. The changes are happening too fast. In five years, for better or worse, we will live in a different world. Research. Listen to all sides. It’s coming, like a tsunami, and nothing we can do will change it unless we choose global destruction of the energy-technology network, and send ALL of mankind back into the Stone Age.

PT is so hilarious.

He entraps all the bad guys.

Every single time.

Department of War is in a tough spot. They don’t have, and have never had, the internal capabilities or talent to develop this sort of technology on their own. That’s why there are so many defense contracts and licensing agreements with private companies.

But the DOW also can’t force any private company to do anything they don’t want to. So unless said companies have legally signed over all rights to their technology, they can simply refuse to play.

That’s the way true free enterprise works. Now, there’s always someone out there who will do anything for the right amount of money or power but maybe they also aren’t the holders of the best solution(s).

The danger is that most federal related change happens at a snail’s pace so defense/war capabilities can become compromised by sudden changes in technology direction. Unfortunately, our model of freedom can put us at a disadvantage in that regard compared to regimes where there is no free enterprise or free choice.

Actually, under the various war powers acts the DOW can commandeer any private sector company, product or technology and use it without their consent including Anthropic AI technology. The federal government can prevent Anthropic from moving to another country even a friendly country and can bar any defense contractor from using Anthropic for any purpose even a civilian use without DOW approval.

I believe the Defense Production Act would be the only real option. That was threatened but they’re now backing off that, most likely because they know it would never hold up to a SCOTUS challenge.

https://federalnewsnetwork.com/defense-news/2026/02/what-to-know-about-defense-protection-act-and-the-pentagons-anthropic-ultimatum/

It’s an interesting situation indeed. An agreement with the USG is fundamentally a contract, and if certain services were not included in that agreement, the government should neither expect nor demand that they be provided or else.

I don’t see this as a left vs. right issue.

If the company is to be believed, it has technical and legal reasons for refusing to allow its AI to be used in autonomous weapons.

Opposing the use of AI to spy on Americans also seems like a principled, ethical stance. No government should have unchecked authority to surveil its own citizens. As history has shown, once a capability is developed and weaponized, it can be used at the discretion of those in power, potentially even against the public.

Again, I’m not persuaded by the patriotic or left vs. right framing. To me, it looks more like bullying, and I’m not a left winger and never have been.

The company’s position sounds more reasonable. However, without more details, it’s difficult to make a definitive judgment.

Just a guess, but I highly doubt that DeepSeek, or whoever the Chinese AI supplier is, puts any restrictions whatsoever on the CCP using it for domestic surveillance or autonomous weapons.

Russia supposedly uses YandexGPT and multiple proprietary models for targeting and other battlefield uses.

https://www.csis.org/analysis/how-russia-reshaping-command-and-control-ai-enabled-warfare

Israel has used GoogleAI and Microsoft.

https://www.ap.org/news-highlights/best-of-the-week/first-winner/2025/as-israel-uses-u-s-made-ai-models-in-war-concerns-arise-about-techs-role-in-who-lives-and-who-dies/

If other countries are going to use AI without restrictions the USA must also be free to operate freely.